Minimum Viable Context: Right Content, Right Time, Right Token Budget

The Minimum Viable Context is the goal: the right context, at the right time, with the right token budget. This is urgent as enterprises move from a handful of copilots to thousands of agents operating in parallel. What’s missing is a disciplined way to compile context from reusable building blocks—while elevating policy and decision boundaries as first-class citizens.

Introduction

The context window, the fixed slice of text (instructions, facts, retrieved material, intermediate results) that a model can “see” at once, is now the scarcest and perhaps most consequential resource in AI systems. It is probably fair to say that today it is also poorly managed. The result is predictable: large language models confidently produce uneven, and sometimes outright unpredictable, results. Why? Not because the enterprise lacks knowledge, but because the decisive constraints weren’t loaded into the window at the moment a decision was made.

The hard lesson the industry seems to have learned is that context engineering is not primarily an information-retrieval problem. Instead, context engineering is an end-to-end memory management problem with a hard token budget.

Agents raise the stakes. Some industry leaders predict that “there will be billions” of them inside a single enterprise. Even if those predictions are far off and each firm runs only thousands or millions of agents, small context failures will be amplified into systemic ones. And because agents act (executing plans, calling tools, updating systems), a missing constraint doesn’t just degrade an answer; it becomes a real-world side effect.

Now the twist, or perhaps the opportunity: agents are also in the execution path, which means they can capture decisions about how the business actually runs. This is effectively the digital exhaust from logging agent activities, combined with some instrumentation. Agents can use these activity logs (sometimes called “decision traces”) to create a feedback loop that lets them learn from past successes (and failures).

What we really need is a way to capture the Minimum Viable Context: delivering the right concepts to the context window at the right time and at the right token budget. We build our MVC from a combination of existing and emerging ideas as well as new and innovative ones:

Concept cards, built from knowledge graphs and ontology foundations, that capture meaning and “senses”, but also assign token budgets associated with their data.

Policy cards, that capture “how a business runs”: its decision boundaries, rules, exceptions, and “if-then” logic embedded throughout the enterprise’s business processes.

Context compiler, that dynamically decomposes a user request into concept and policy cards, acquires data optimized for a required token budget, and serves it for an agent’s LLM context window.

Feedback loop, that captures agent exhaust (outputs) and compares it with the inputs created by the context compiler, creating the building blocks for a powerful feedback mechanism that helps agents learn.

Virtual Context Manager, that manages the end-to-end process: concept and policy card creation, context compilation, and the feedback loop.

This article lays out the architecture and the discipline for these components. For a full in-depth review of these concepts and the architecture, take a look at our YouTube video.

Problem Statement

The brain for agents – its LLM – is frozen at the time of training. The agent (actually its LLM) knows nothing about an enterprise’s private data. To provide enterprise-specific information, we have landed on a technique called retrieval-augmented generation (RAG): index the corpus, chunk documents into smaller pieces (often stored in a vector database or a knowledge graph), retrieve the “most relevant” passages, and paste them into the context window.

The core limitation is that chunking can be arbitrary: it breaks coherent source material into fragments that lose the original logic, decision boundaries, and intent. Even when retrieval lands near the right place, the returned chunks are often incomplete—missing the surrounding definitions, dependencies, and qualifiers that make a constraint operative—so the model is forced to infer what should have been explicit.

To address this shortcoming, teams naively respond by adding more chunks. But then the system can’t see the forest for the trees: attention skews toward the beginning and end of the window, key thresholds and exceptions get buried in clutter, and the model behaves as if the rules weren’t present. The core problem remains: the rules and policies that actually run the enterprise (exceptions, thresholds, if-then branches, and regime boundaries) are lost.

From a practical perspective, we find that relevance is conditional on intent and role; the same question asked by a compliance analyst versus an operations analyst implies different obligations, not just different facts. In effect, relying on chunk retrieval tends to lead to concept overlaps, producing answers that sound coherent yet are operationally wrong.

The Minimum Viable Context

What we really need is to deliver a Minimum Viable Context (MVC) whose definition is quite simple: the right context, at the right time, at the right token budget:

Minimum. Our objective is the smallest working set in the context window that still lets the agent work correctly. The size constraint is practical: the context window has a hard token budget, and every additional piece of material consumes some of that scarce capacity (and increases cost).

Viable. A viable working set for a context window includes the meanings (concepts) that must be pinned and the governing constraints (policies) that must be applied, including any exceptions and required inputs needed to execute those constraints. Viability is assessed under the same hard token budget: if the necessary constraints and bindings cannot fit, the system should treat that as a shortfall that requires verification, additional inputs, or escalation before acting.

Context. Working under a hard token budget, the context is a dynamic compilation of concepts and their acquired data, policy information, and system information (tools, etc.) that is served into the model’s context window.

Concept cards

As we have pointed out, the MVC is built from a set of related components. Concept cards are the quantum of disambiguated, token-budget-aware knowledge, in the same spirit as old-style library cards that cross-referenced books by title, author, and subject to make retrieval precise and repeatable. Here, a concept card is simply a small YAML/JSON record that conforms to a strict JSON schema, so it can be validated, indexed, diffed, and assembled deterministically.

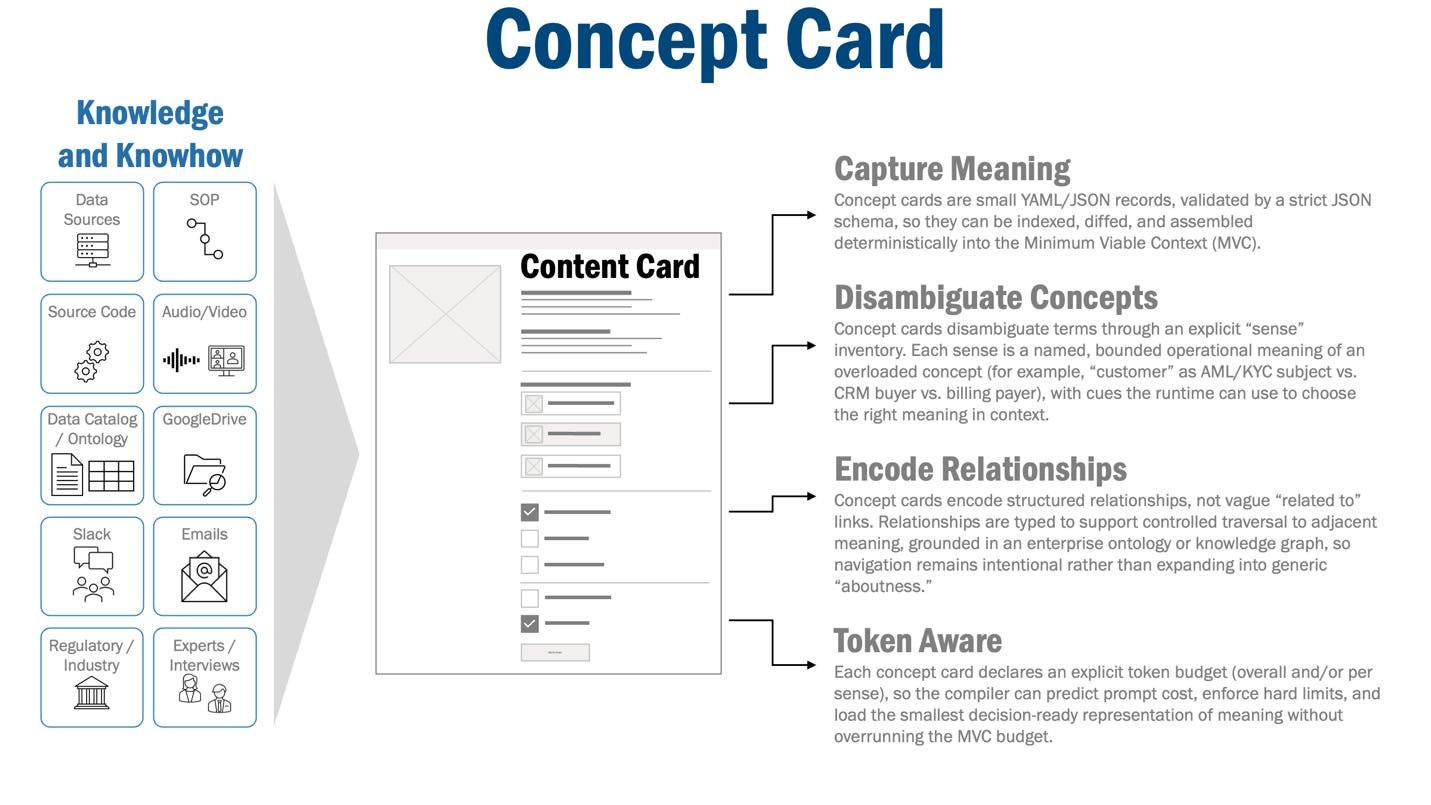

Figure 1 - Concept Card

A concept card captures meaning. Its job is to provide a stable, governed way to say what a term means in this enterprise. Concept cards include a stable identity (card ID and canonical name), an owner (the domain authority for that meaning), a sense inventory, relationships to other concepts (cards), provenance, and data acquisition plans.
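To make the idea of a schema-conforming card concrete, here is a minimal Python sketch of a concept card and a hand-rolled validity check. The field names (`sense_id`, `system_of_record`, `token_budget`, and so on), the sample values, and the `validate` helper are all hypothetical; a production system would more likely validate against a real JSON Schema. Serializing with sorted keys gives the stable text the article relies on for indexing and diffing.

```python
import json
from dataclasses import asdict, dataclass, field

# Hypothetical field layout for a concept card; the real schema is not given in the article.
@dataclass
class Sense:
    sense_id: str                 # e.g. "customer.aml_subject"
    definition: str
    system_of_record: str         # where the data for this meaning lives
    cues: dict = field(default_factory=dict)  # disambiguation cues (role, workflow, ...)

@dataclass
class ConceptCard:
    card_id: str
    canonical_name: str
    owner: str                    # domain authority for this meaning
    senses: list
    relationships: dict = field(default_factory=dict)  # typed edges: relation -> card IDs
    provenance: list = field(default_factory=list)     # cited source spans with versions
    token_budget: int = 200       # illustrative cap for this card's data

REQUIRED = ("card_id", "canonical_name", "owner", "senses")

def validate(record):
    """Return a list of schema violations; an empty list means the record is valid."""
    errors = [f"missing field: {k}" for k in REQUIRED if k not in record]
    senses = record.get("senses", [])
    if not senses:
        errors.append("at least one sense is required")
    ids = [s.get("sense_id") for s in senses]
    if len(ids) != len(set(ids)):
        errors.append("duplicate sense_id")
    if record.get("token_budget", 1) <= 0:
        errors.append("token_budget must be positive")
    return errors

customer = ConceptCard(
    card_id="concept:customer",
    canonical_name="Customer",
    owner="enterprise-data-office",
    senses=[
        Sense("customer.aml_subject", "Screened subject in AML/KYC", "kyc_system",
              {"workflow": "aml_kyc"}),
        Sense("customer.sales_account", "Buyer or account in the CRM", "crm",
              {"workflow": "sales"}),
    ],
    relationships={"holds": ["concept:account"]},
    provenance=["kyc-policy.pdf#section-2.1@v3"],
)
record = asdict(customer)
print(validate(record))                         # → []
canonical = json.dumps(record, sort_keys=True)  # stable text for diffing and indexing
```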

Senses are particularly important because they disambiguate complex or overloaded concepts. A sense is a discrete operational meaning of an overloaded term—an explicit “which meaning do we mean here?” option that the runtime can select.

“Customer,” for example, is a broad and almost intuitive topic, but it has different senses depending on its specific usage: in an AML/KYC workflow it may mean the screened subject; in sales it may mean the buyer or account in a CRM; in billing it may mean the paying account in a revenue system.

So, each sense is named, bounded, and anchored to enterprise usage: the identifiers it uses, the system(s) of record it maps to, and the cues the runtime can use to disambiguate (role, workflow stage, channel, jurisdiction, required fields).
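Sense selection can be sketched as cue matching against the current case state. This is one simple scoring rule under assumed data shapes, not the authors' algorithm: a sense is disqualified if any of its cues contradicts the case state, the remaining senses are scored by matching cues, and a tie or a zero score returns `None` so the runtime can ask for clarification rather than guess.

```python
from dataclasses import dataclass

@dataclass
class Sense:
    sense_id: str
    cues: dict  # cue name -> expected value, e.g. {"workflow": "aml_kyc"} (hypothetical)

def select_sense(senses, case_state):
    """Pick the sense whose cues best match the case state.

    Returns None when nothing matches or two senses tie, so the caller
    can request clarification instead of guessing.
    """
    scored = []
    for s in senses:
        # A cue that contradicts the case state disqualifies the sense outright.
        if any(k in case_state and case_state[k] != v for k, v in s.cues.items()):
            continue
        score = sum(1 for k, v in s.cues.items() if case_state.get(k) == v)
        if score > 0:
            scored.append((score, s.sense_id))
    if not scored:
        return None
    scored.sort(reverse=True)
    if len(scored) > 1 and scored[0][0] == scored[1][0]:
        return None  # ambiguous: two senses fit equally well
    return scored[0][1]

customer_senses = [
    Sense("customer.aml_subject", {"workflow": "aml_kyc", "role": "compliance_analyst"}),
    Sense("customer.sales_account", {"workflow": "sales"}),
    Sense("customer.billing_account", {"workflow": "billing"}),
]
print(select_sense(customer_senses, {"workflow": "aml_kyc", "role": "compliance_analyst"}))
# → customer.aml_subject
print(select_sense(customer_senses, {"role": "operations_analyst"}))
# → None
```

Returning `None` on ambiguity mirrors the article's stance that a missing or unclear binding is a shortfall to resolve, not something to paper over.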

Concept cards also capture relationships to other concepts, so navigation stays controlled and intentional. Instead of vague “related to” links, relationships are typed to express operational structure so the concepts can be traversed to adjacent meaning without expanding into generic “aboutness.” To do this, concept cards are built on a foundation of enterprise ontology or knowledge management (knowledge graph) systems.

Finally, concept cards are operational because they contain:

Provenance, citing exact source spans (document sections, table rows, tickets, PRs, transcript turns) with version metadata so meanings can be audited and updated safely.

Data acquisition instructions, encoding how to acquire the underlying data for each sense—what system to query, which identifiers/fields to use, and what evidence is required—so “meaning” is not just descriptive text but a usable pointer to the enterprise data needed to apply policy correctly.

Policy Cards

Concept cards pin meaning (which entity and which attributes are in play); policy cards pin constraints (what rules apply and what actions they require); and together they let the runtime assemble a step-specific working set under a hard token budget.

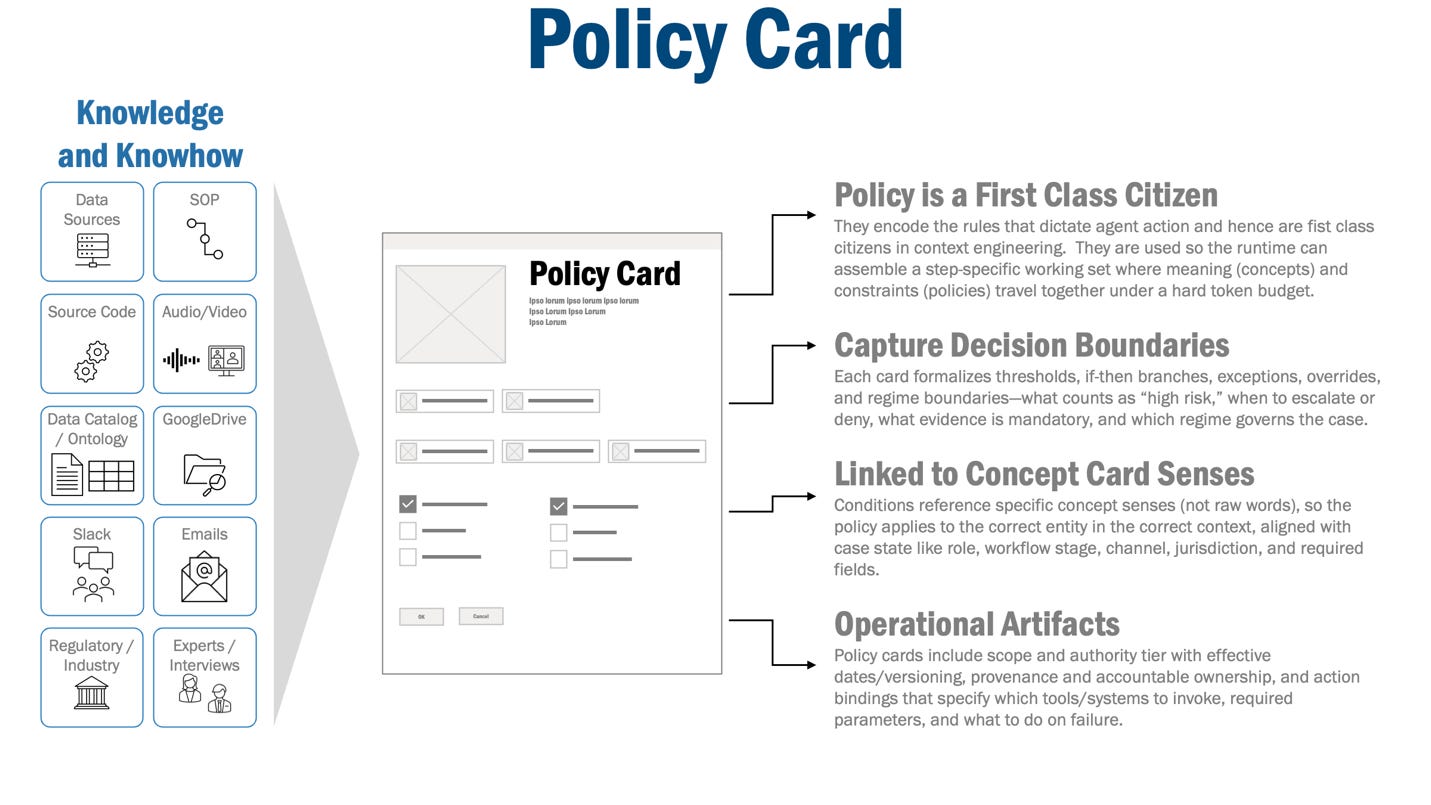

Figure 2 - Policy Card

Policy cards are a key differentiator from approaches that focus primarily on content and concepts. In our approach, policies (cards) are a first-class citizen in context engineering.

Policy cards capture decision boundaries, the parts of enterprise knowledge that determine outcomes. They include thresholds, if-then branches, exceptions, overrides, and regime boundaries—what counts as “high risk,” when to escalate, when to deny, what evidence is mandatory, and which policy regime governs this case. They matter because they are the difference between a system that is merely informative and one that is operational: if an agent misses a boundary, it can take the wrong branch with high confidence and produce a real side effect.

Policy cards rely on concept cards to make their conditions unambiguous. Conditions reference concept card senses rather than raw words, so “customer” in an AML context can be bound to the screened subject concept card sense, while “customer” in sales can be bound to the account sense, and the policy applies to the correct entity. This is also where case state matters: concept sense selection is informed by role, workflow stage, channel, jurisdiction, and required fields, and the policy card’s scope must match the same state.
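A minimal sketch of the eligibility check described above, assuming a hypothetical `PolicyCard` shape in which the condition binds to a concept sense ID and the scope is a set of exact-match case-state fields. The point it illustrates is that the policy is filtered by the selected sense, not by the word “customer”.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class PolicyCard:
    policy_id: str
    binds_sense: str                   # concept-card sense the conditions refer to
    scope: dict                        # e.g. {"jurisdiction": "CA", "workflow": "aml_kyc"}
    effective_from: date
    effective_to: Optional[date] = None

def is_eligible(policy, selected_sense, case_state, today):
    """A policy applies only if it binds the sense the runtime selected,
    every scope field matches the case state, and it is in force today."""
    if policy.binds_sense != selected_sense:
        return False
    if any(case_state.get(k) != v for k, v in policy.scope.items()):
        return False
    if today < policy.effective_from:
        return False
    return policy.effective_to is None or today <= policy.effective_to

aml_screening = PolicyCard("policy:aml-screening", "customer.aml_subject",
                           {"jurisdiction": "CA", "workflow": "aml_kyc"}, date(2024, 1, 1))
state = {"jurisdiction": "CA", "workflow": "aml_kyc", "role": "compliance_analyst"}
print(is_eligible(aml_screening, "customer.aml_subject", state, date(2025, 6, 1)))    # → True
print(is_eligible(aml_screening, "customer.sales_account", state, date(2025, 6, 1)))  # → False
```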

A policy card is a compact record of a decision boundary and the metadata needed to apply it. Representative fields include:

Scope (jurisdiction, product, channel, lifecycle stage), authority tier (policy, procedure, guidance, workaround), and effective dates/version (what is in force now).

Structured decision boundaries, including conditions (what must be true), outcomes/obligations (what must be done), and exceptions/overrides (what changes the default).

Provenance and ownership, that link the policy back to accountable owners and originating sources (documents, attributes, systems of record, standard operating procedures, etc.).

Action bindings, including which tool/system to call, required parameters, and what to do on failure.
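The fields above can be illustrated with a small decision-boundary evaluator. Everything here is assumed for illustration: the single-threshold `Rule`, the exact-match `Override`, the 0.8 threshold, and the tool names in `action_bindings`. Note the order of checks: missing required inputs produce a `verify` fault, exceptions are applied before the default rule, and the outcome is mapped to its action binding.

```python
from dataclasses import dataclass, field

@dataclass
class Rule:
    input_name: str        # case input the condition reads, e.g. "risk_score"
    threshold: float       # condition: input >= threshold
    outcome: str           # obligation when the condition holds
    default: str           # outcome otherwise

@dataclass
class Override:
    when: dict             # exact-match conditions that change the default
    outcome: str

@dataclass
class PolicyCard:
    policy_id: str
    required_inputs: list
    rule: Rule
    overrides: list = field(default_factory=list)
    action_bindings: dict = field(default_factory=dict)  # outcome -> tool to call

def evaluate(policy, inputs):
    """Apply one decision boundary and return (outcome, bound_tool)."""
    missing = [k for k in policy.required_inputs if k not in inputs]
    if missing:
        # A missing required input is a shortfall to verify, not a gap to guess across.
        return ("verify", "missing inputs: " + ", ".join(missing))
    for ov in policy.overrides:            # exceptions take precedence over the default
        if all(inputs.get(k) == v for k, v in ov.when.items()):
            outcome = ov.outcome
            break
    else:
        r = policy.rule
        outcome = r.outcome if inputs[r.input_name] >= r.threshold else r.default
    return (outcome, policy.action_bindings.get(outcome))

high_risk = PolicyCard(
    policy_id="policy:aml-high-risk@v3.2",
    required_inputs=["risk_score", "pep_status"],
    rule=Rule("risk_score", 0.8, outcome="escalate", default="approve"),
    overrides=[Override({"pep_status": True}, "escalate")],
    action_bindings={"escalate": "case_mgmt.open_review", "approve": "onboarding.approve"},
)
print(evaluate(high_risk, {"risk_score": 0.35, "pep_status": False}))
# → ('approve', 'onboarding.approve')
print(evaluate(high_risk, {"risk_score": 0.35, "pep_status": True}))
# → ('escalate', 'case_mgmt.open_review')
print(evaluate(high_risk, {"risk_score": 0.9}))
# → ('verify', 'missing inputs: pep_status')
```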

The Context Compiler

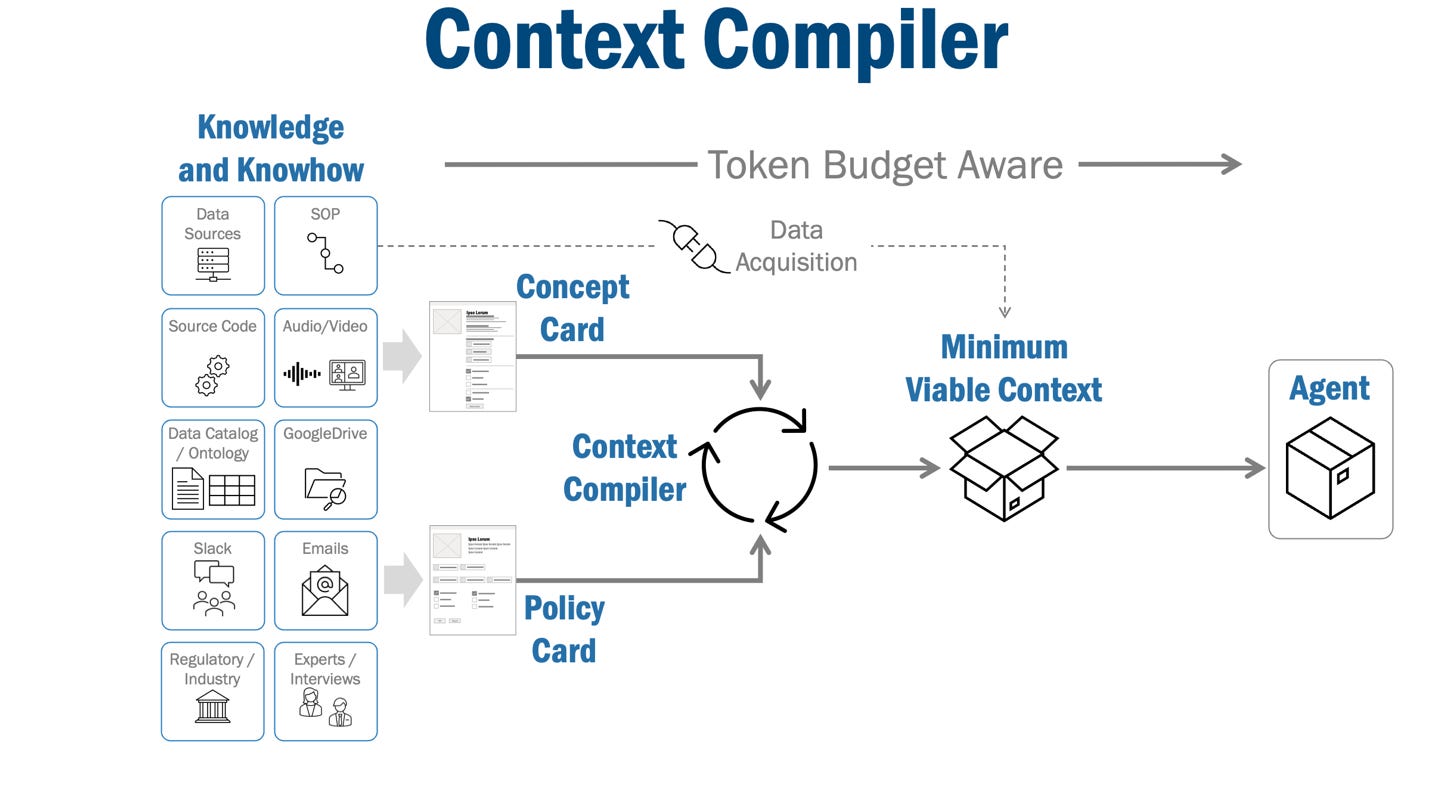

The context compiler is the runtime component that creates and executes a context plan to produce the Minimum Viable Context. The context plan is built from several inputs: the user request, available concept and policy cards, the current case state (jurisdiction, product, channel, lifecycle stage, risk tier), and a hard token budget for the context window.

Figure 3 – Context Compiler

The context plan is created by an LLM through decomposition of a user request into a directed acyclic graph (DAG) representing the steps and parameters to identify and gather data. The DAG conforms to a strict JSON schema so it can be validated and executed consistently (plus this helps the LLM create consistent and repeatable plans). A typical step in the DAG contains the concept and policy card IDs (plus sense), the data acquisition method (which system/tool to query), the expected output shape, and the maximum token allocation allowed for the result.
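Because the plan must conform to a strict schema, it can be checked before anything runs. The sketch below assumes a hypothetical step layout mirroring the fields named above (card IDs, sense, tool, output shape, token cap, dependencies) and uses Python's standard `graphlib` module to confirm the steps form a DAG; it also checks that per-step allocations fit within the overall budget.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical required keys for each step of a context plan.
STEP_KEYS = {"id", "concept_card", "sense", "policy_cards", "tool",
             "output_shape", "max_tokens", "depends_on"}

def validate_plan(plan):
    """Return a list of problems; an empty list means the plan can be executed."""
    errors = []
    ids = {s.get("id") for s in plan["steps"]}
    for s in plan["steps"]:
        missing = STEP_KEYS - s.keys()
        if missing:
            errors.append(f"{s.get('id')}: missing {sorted(missing)}")
        if s.get("max_tokens", 0) <= 0:
            errors.append(f"{s.get('id')}: max_tokens must be positive")
        for dep in s.get("depends_on", []):
            if dep not in ids:
                errors.append(f"{s.get('id')}: unknown dependency {dep}")
    total = sum(s.get("max_tokens", 0) for s in plan["steps"])
    if total > plan["token_budget"]:
        errors.append(f"step allocations {total} exceed budget {plan['token_budget']}")
    graph = {s.get("id"): set(s.get("depends_on", [])) for s in plan["steps"]}
    try:
        list(TopologicalSorter(graph).static_order())  # raises CycleError on a cycle
    except CycleError:
        errors.append("plan is not acyclic")
    return errors

plan = {
    "token_budget": 1200,
    "steps": [
        {"id": "resolve_customer", "concept_card": "concept:customer",
         "sense": "customer.aml_subject", "policy_cards": [],
         "tool": "kyc.lookup", "output_shape": {"customer_id": "str"},
         "max_tokens": 150, "depends_on": []},
        {"id": "screening_result", "concept_card": "concept:screening",
         "sense": "screening.sanctions", "policy_cards": ["policy:aml-high-risk@v3.2"],
         "tool": "screening.get_result", "output_shape": {"risk_score": "float"},
         "max_tokens": 300, "depends_on": ["resolve_customer"]},
    ],
}
print(validate_plan(plan))  # → []
```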

The context plan is bound to parameters extracted from the user request and case state. The LLM extracts (in the same step when the context plan is created) the values needed to run each step—customer identifiers, account numbers, dates, jurisdictions, product codes—and plugs them into the step’s parameter fields.

Importantly, the next step – executing the plan – is deterministic. The compiler runs the steps in dependency order, calls the specified tools or data sources, and gathers the results. Two constraints shape what is acquired: relevance (the step must support the next decision gate) and the token budget. Each step has its own token allocation, and its result may be truncated, summarized, or skipped if it exceeds that cap or becomes unnecessary once other results resolve the decision.
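Deterministic execution can be sketched with `graphlib.TopologicalSorter`, which yields the steps in dependency order for a given plan. The tool callables, the `decided` predicate, and the word-count token estimate are stand-ins (a real system would use the model's tokenizer); only truncation is shown, since summarization would require a model call.

```python
from graphlib import TopologicalSorter

def count_tokens(text):
    # Crude stand-in (assumption): one token per whitespace-separated word.
    # A real system would use the target model's tokenizer.
    return len(text.split())

def truncate(text, max_tokens):
    return " ".join(text.split()[:max_tokens])

def execute_plan(steps, tools, params, decided=lambda results: False):
    """Run plan steps in dependency order, enforcing each step's token cap.

    steps:   {step_id: {"tool": name, "max_tokens": int, "depends_on": [ids]}}
    tools:   {name: callable(params, upstream_results) -> str}   (hypothetical)
    decided: predicate on results; once true, remaining steps are skipped.
    """
    graph = {sid: set(s["depends_on"]) for sid, s in steps.items()}
    results, skipped = {}, []
    for sid in TopologicalSorter(graph).static_order():
        if decided(results):
            skipped.append(sid)  # decision already resolved: no more acquisition
            continue
        step = steps[sid]
        upstream = {d: results[d] for d in step["depends_on"]}
        raw = tools[step["tool"]](params, upstream)
        if count_tokens(raw) > step["max_tokens"]:
            raw = truncate(raw, step["max_tokens"])  # over its cap: truncate
        results[sid] = raw
    return results, skipped

tools = {
    "kyc.lookup": lambda p, up: f"customer {p['customer_id']} status active",
    "screening.get_result": lambda p, up: "risk_score 0.35 " + "detail " * 500,
}
steps = {
    "resolve_customer": {"tool": "kyc.lookup", "max_tokens": 20, "depends_on": []},
    "screening_result": {"tool": "screening.get_result", "max_tokens": 50,
                         "depends_on": ["resolve_customer"]},
}
results, skipped = execute_plan(steps, tools, {"customer_id": "C-123"})
print(count_tokens(results["screening_result"]))  # → 50
```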

After acquisition, the compiler packs the acquired data and policies into a context manifest that is first logged and then loaded into the context window in a stable order. The manifest combines the user request, the acquired data (bounded by per-step budgets), the pinned concept senses, and the relevant policy cards. Policy cards are included for their decision boundaries (thresholds, exceptions, overrides, regime scope, required inputs, and action bindings) so that they constrain the model before it acts. In effect, the context manifest becomes the audit record of what the agent was thinking: how it decomposed and interpreted the user request into the working set placed in its context window.
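One way to get a stable, auditable manifest is to sort every section deterministically, hash the canonical JSON, and log the digest before rendering the prompt. This is a sketch under assumed section names and rendering format; the article specifies neither. The section order follows the order listed above.

```python
import hashlib
import json

def build_manifest(user_request, acquired, senses, policies):
    """Assemble the manifest in a reproducible order and return it with a SHA-256 digest."""
    manifest = {
        "user_request": user_request,
        "acquired_data": dict(sorted(acquired.items())),   # step_id -> bounded result
        "pinned_senses": dict(sorted(senses.items())),     # concept card -> selected sense
        "policy_cards": sorted(policies, key=lambda p: p["policy_id"]),
    }
    canonical = json.dumps(manifest, sort_keys=True)
    return manifest, hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def render(manifest):
    """Serialize the sections in a fixed order for the context window."""
    parts = [f"## User request\n{manifest['user_request']}"]
    parts += [f"## Data: {k}\n{v}" for k, v in manifest["acquired_data"].items()]
    parts += [f"## Meaning: {k} = {v}" for k, v in manifest["pinned_senses"].items()]
    parts += [f"## Policy: {p['policy_id']}\n{json.dumps(p['boundary'], sort_keys=True)}"
              for p in manifest["policy_cards"]]
    return "\n\n".join(parts)

manifest, digest = build_manifest(
    "Can we onboard customer C-123?",
    {"screening_result": "risk_score 0.35"},
    {"concept:customer": "customer.aml_subject"},
    [{"policy_id": "policy:aml-high-risk@v3.2",
      "boundary": {"risk_score_gte": 0.8, "outcome": "escalate"}}],
)
audit_log = [{"digest": digest, "manifest": manifest}]  # log first...
prompt = render(manifest)                               # ...then fill the context window
```

Because the same inputs always yield the same digest and the same prompt text, the logged record can later be matched exactly to what the model saw.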

The output of compilation is an MVC: the smallest set of meanings, constraints, and supporting data needed to make the next decision correctly under the hard token budget. “Stop” is an explicit rule: the compiler stops adding material once MVC criteria are met, rather than chasing top-k retrieval or adding background text “just in case.”
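The explicit stop rule can be sketched as greedy packing against a set of required decision gates. The gate labels, token counts, and candidate ranking are illustrative assumptions. The behavior to note: packing stops once every gate is covered, so the large background document is never loaded, and running out of budget first yields an escalation fault rather than a partial context.

```python
def compile_mvc(candidates, required_gates, budget):
    """Pack candidates in priority order and stop as soon as the MVC is reached.

    candidates:     [(item_id, tokens, gates_covered), ...] already ranked
    required_gates: decision gates the next step needs (hypothetical labels)
    Returns an "mvc" result, or an "escalate" fault if the budget runs out
    before every required gate is covered.
    """
    selected, used, covered = [], 0, set()
    for item_id, tokens, gates in candidates:
        if required_gates <= covered:
            break                                   # stop rule: MVC reached
        new = set(gates) & (required_gates - covered)
        if not new:
            continue                                # nothing new for an open gate
        if used + tokens > budget:
            continue                                # does not fit; try the next item
        selected.append(item_id)
        used += tokens
        covered |= new
    if not required_gates <= covered:
        return {"status": "escalate", "missing": sorted(required_gates - covered),
                "selected": selected}
    return {"status": "mvc", "selected": selected, "tokens": used}

candidates = [
    ("policy:aml-high-risk@v3.2", 180, {"risk_threshold"}),
    ("step:screening_result", 120, {"risk_score"}),
    ("step:customer_profile", 90, {"customer_identity"}),
    ("doc:aml-background", 2000, {"risk_threshold"}),   # "just in case" material
]
gates = {"risk_threshold", "risk_score", "customer_identity"}
result = compile_mvc(candidates, gates, budget=1000)
print(result["status"], result["tokens"])  # → mvc 390
```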

As in most cases, we stand on the shoulders of giants and re-purpose existing ideas: A useful way to think about our context compiler is to look at how a database executes a SQL query. A prompt is like an SQL query: it expresses intent, but it is not an execution strategy. The context plan is like a query execution plan: it decides which “tables” to consult (concept and policy inventories, systems of record), the join keys (sense bindings, identifiers), the filters (scope and eligibility), and the cost constraints (token budgets) so the result is correct and bounded.

Agent Exhaust and the Feedback Loop

Agent activity logs capture execution outcomes, but they also contain information, sometimes explicit and often implied, about the decisions behind them. Foundation Capital defines these logs as decision traces, which structure “the exceptions, overrides, precedents, and cross-system context that currently live in Slack threads, deal desk conversations, escalation calls, and people’s heads.” As they put it, “rules tell an agent what should happen in general... decision traces capture what happened in this specific case (‘we used X definition, under policy v3.2, with a VP exception, based on precedent Z, and here’s what we changed’)”.

The key point is that the agent is the executor, and its execution exhaust contains the logs and information that form the basis for decision traces. What is still needed, however, is a way to decompose agent exhaust into “decision cards”. Foundation Capital goes on to state that “context graphs” are the mechanism to capture decision traces.

We think a more specific approach is available to us: we suggest starting with the context plan, a concrete structured artifact which, in effect, is a detailed description of the inputs to an agent execution, to frame and map the agent’s output. And by comparing the inputs to outputs, we have the building blocks for a feedback loop.
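A minimal sketch of that comparison, assuming the plan records the sense, policy, and expected outcome for each step and the execution trace records what actually happened. The field names and finding labels are hypothetical; each finding points to a concrete place to look (the wrong meaning, the wrong policy, a human override, an unplanned step).

```python
def reconcile(plan_steps, trace):
    """Compare what the context plan expected with what the agent actually did.

    plan_steps: {step_id: {"sense": id, "policy": id, "expected_outcome": str}}
    trace:      {step_id: {"sense": id, "policy": id, "outcome": str, "override_by": str}}
    Returns (step_id, finding) pairs that can feed card and plan refinement.
    """
    findings = []
    for sid, expected in plan_steps.items():
        actual = trace.get(sid)
        if actual is None:
            findings.append((sid, "step_not_executed"))
            continue
        if actual["sense"] != expected["sense"]:
            findings.append((sid, "wrong_meaning"))
        if actual["policy"] != expected["policy"]:
            findings.append((sid, "wrong_policy"))
        if actual["outcome"] != expected["expected_outcome"]:
            kind = "human_override" if actual.get("override_by") else "unexpected_outcome"
            findings.append((sid, kind))
    for sid in sorted(trace.keys() - plan_steps.keys()):
        findings.append((sid, "unplanned_step"))  # the agent did something the plan did not foresee
    return findings

plan = {"decide": {"policy": "policy:aml-high-risk@v3.2", "sense": "customer.aml_subject",
                   "expected_outcome": "approve"}}
trace = {"decide": {"policy": "policy:aml-high-risk@v3.2", "sense": "customer.aml_subject",
                    "outcome": "escalate", "override_by": "vp-compliance"},
         "extra_lookup": {"policy": None, "sense": None, "outcome": "n/a"}}
print(reconcile(plan, trace))
# → [('decide', 'human_override'), ('extra_lookup', 'unplanned_step')]
```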

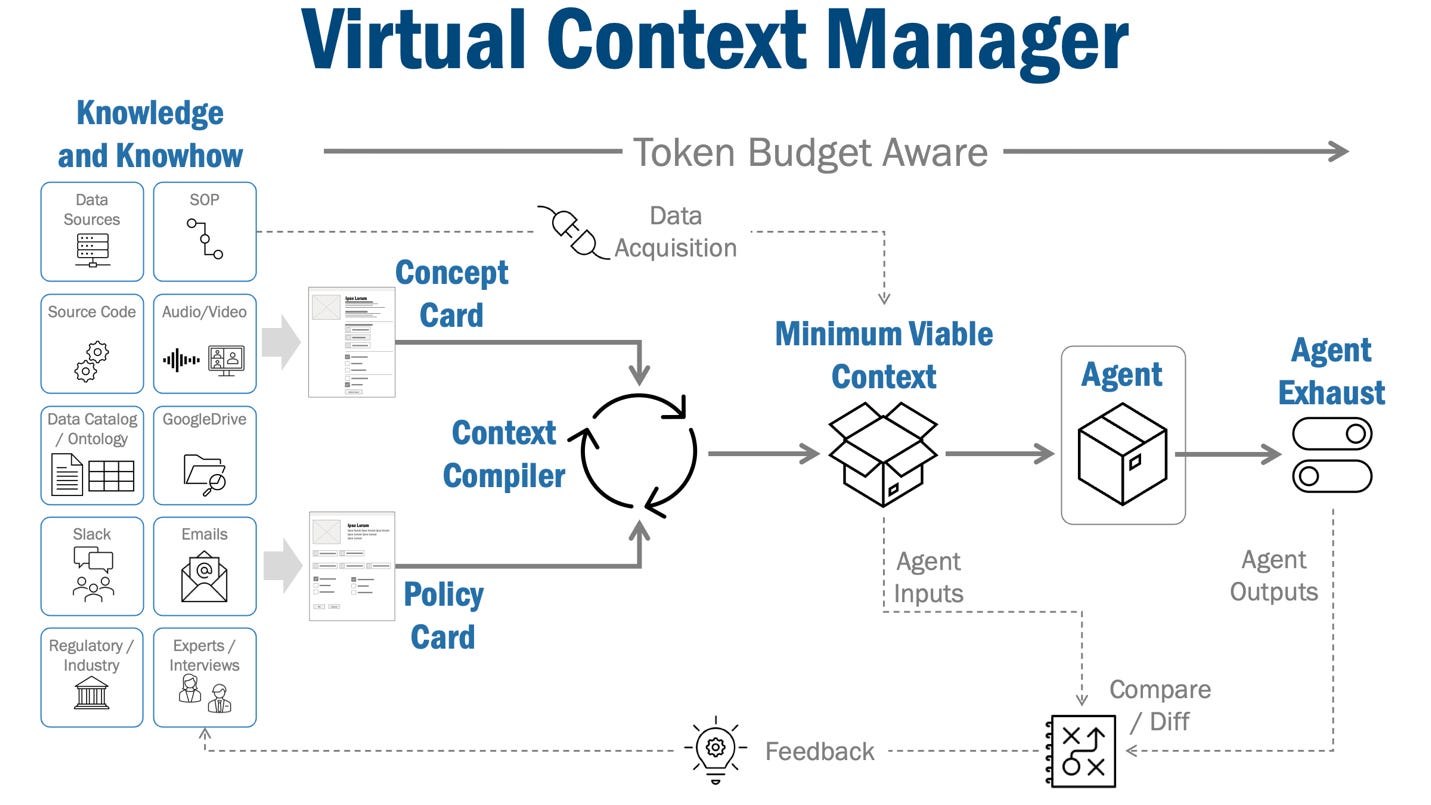

Putting it all together: The Virtual Context Manager

The Virtual Context Manager (VCM) manages the end-to-end context engineering process. Its goal is explicit and operational: deliver in a repeatable way a Minimum Viable Context (MVC)—the right context, at the right time, under the right token budget.

Figure 4 – Virtual Context Manager

At a high level, the VCM operates in several modes.

Background mode, where it manufactures and maintains the cards and the structures that make them governable. Card maintenance is a continuous distillation process. Once operational, the system detects ‘staleness’ via provenance links—triggering an automated refresh alert whenever a source document is updated, ensuring the card library evolves alongside the enterprise.

Online mode, where it compiles the working set – the MVC - for the next step by executing a plan in deterministic code: pin meanings, filter eligible policies, resolve conflicts by precedence, pack under budget, stop at MVC, or fault into verification/escalation when MVC cannot be reached safely.

Feedback mode, where the input MVC, comprising the expected outcome and its associated decision boundary, is combined with agent exhaust (logs, decision traces) that captures the outcomes and decisions actually made, creating a before-after-optimize feedback loop.
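The “resolve conflicts by precedence” and “fault into verification/escalation” steps of online mode can be sketched as follows. The precedence order is an assumption: it reuses the authority tiers listed for policy cards (policy, procedure, guidance, workaround) in that order, breaks ties within a tier by the newer integer version, and faults when precedence still cannot decide.

```python
# Assumed precedence: lower rank wins. The article lists these authority tiers
# but does not state their order; this ordering is an illustrative assumption.
TIER_RANK = {"policy": 0, "procedure": 1, "guidance": 2, "workaround": 3}

def rank(card):
    # Higher authority first; within a tier, the newer version (an integer revision) first.
    return (TIER_RANK[card["tier"]], -card["version"])

def resolve_conflicts(eligible):
    """Pick one governing card per decision boundary, or fault on an unresolved tie."""
    by_boundary = {}
    for card in eligible:
        by_boundary.setdefault(card["boundary"], []).append(card)
    winners, faults = {}, []
    for boundary, cards in sorted(by_boundary.items()):
        ranked = sorted(cards, key=rank)
        if len(ranked) > 1 and rank(ranked[0]) == rank(ranked[1]):
            faults.append(boundary)     # precedence cannot decide: escalate
        else:
            winners[boundary] = ranked[0]["policy_id"]
    return winners, faults

eligible = [
    {"policy_id": "aml-policy-v3", "boundary": "high_risk_threshold", "tier": "policy", "version": 3},
    {"policy_id": "ops-workaround-7", "boundary": "high_risk_threshold", "tier": "workaround", "version": 7},
    {"policy_id": "edd-guidance-a", "boundary": "edd_evidence", "tier": "guidance", "version": 1},
    {"policy_id": "edd-guidance-b", "boundary": "edd_evidence", "tier": "guidance", "version": 1},
]
winners, faults = resolve_conflicts(eligible)
print(winners)  # → {'high_risk_threshold': 'aml-policy-v3'}
print(faults)   # → ['edd_evidence']
```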

Once again, an analogy helps our understanding: consider virtual memory in computers. In an operating system, the virtual memory manager (VMM) decides what must be resident in RAM now, what can remain on disk, and how to maintain a working set that lets the program make progress without constant stalls. In our case, the context window is scarce RAM, the corpus is disk, cards are the pages, and MVC is the working-set target. The VCM’s runtime component—the context compiler (VCC)—plays the role of the VMM: it decides which pages to map into the prompt for this step, under a hard budget, so the agent can execute without drifting or thrashing.

The Case for Explainability

Explainability is the ability to explain what an agent did, and why. It turns context loading into a transparent, logged process rather than an emergent side effect of similarity search. Each step produces an explicit context plan—structured, reviewable, and stored—showing what meanings were pinned, which policy regimes were considered eligible, what decision gates were expected, and what data bindings were required.

With explainability comes provenance. Provenance is made explicit in a concept or policy card by linking back to the original material it came from, down to the specific paragraph, table row, or ticket comment, in a specific version of the source.

And you can use explainability as the foundation for a compelling feedback loop: a concrete record of the agent’s execution path, mapped back to the context acquisition plan and the specific pages that were resident when each branch was taken. We think this is stronger than “observability” – rather, it is a reconciliation mechanism. You can see whether a bad outcome came from loading the wrong meaning page, selecting the wrong governing policy page, missing an exception, or failing to obtain required data before acting.

The Foundation for Trust

Trust is not a tone the model strikes; it is a property the system can prove. In a Virtual Context Manager, trust is the composition of four capabilities: provenance (where a constraint came from), explainability (what the agent did and why), measurement (how the system performs under budget), and feedback (how it improves when it fails). If any one of those is missing, you do not have a trustworthy agent—you have an improviser with better search. In this framing:

Trust = Provenance + Explainability + Measurement + Feedback

Provenance turns constraints into accountable artifacts. It lets the VCM choose between competing pages deterministically, detect when a page is stale, and page in evidence selectively when risk is high. Without provenance, policies degrade into “soft facts”: plausible today, unprovable tomorrow, and impossible to maintain as the corpus shifts. With it, the manager can treat policy pages as something closer to executable governance, not retrieved prose.
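Staleness detection via provenance can be sketched as a comparison between the version each card cites and the current version in a document registry. The citation format and the `source_versions` registry are hypothetical; a `None` current version flags a cited source that no longer exists.

```python
def find_stale(cards, source_versions):
    """Flag every card citation whose source has changed or disappeared.

    cards:           {card_id: [{"source": span_id, "version": str}, ...]}
    source_versions: {span_id: current_version} from a document registry (hypothetical)
    Returns (card_id, source, cited_version, current_version) tuples; a current
    version of None means the cited source no longer exists.
    """
    alerts = []
    for card_id, citations in sorted(cards.items()):
        for cite in citations:
            current = source_versions.get(cite["source"])
            if current != cite["version"]:
                alerts.append((card_id, cite["source"], cite["version"], current))
    return alerts

cards = {
    "concept:customer": [{"source": "kyc-policy.pdf#2.1", "version": "v3"}],
    "policy:aml-high-risk": [{"source": "aml-sop.docx#4", "version": "v3.1"}],
}
registry = {"kyc-policy.pdf#2.1": "v3", "aml-sop.docx#4": "v3.2"}
print(find_stale(cards, registry))
# → [('policy:aml-high-risk', 'aml-sop.docx#4', 'v3.1', 'v3.2')]
```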

Explainability and measurement make that governance operational. Together they produce transparent context plans for each prompt (the page table for what was mapped into working memory and why) and then log the agent’s execution against that mapped context.

Feedback closes the loop, and this is where trust becomes durable. Decision traces reconcile expected behavior (the plan) with actual behavior (the execution), and outcomes—human overrides, audit findings, reversals, downstream errors—become structured signals to refine future compilation. That is the hard-hitting claim: the VCM does not ask you to trust a black box; it gives you an audit trail, a set of counters, and a correction mechanism. Agents can act at scale only when trust is engineered as provenance + explainability + measurement + feedback, not asserted as a vibe.

Conclusion

The context window has become the scarce resource that determines whether agents behave like disciplined operators or confident improvisers. This article argued that the hard problem is not “better retrieval,” but memory management under a token budget: pin meaning and load only the governing policies and constraints, creating a Minimum Viable Context that delivers the right context, at the right time, at the right token budget.

***

This article was written in collaboration with John Miller. Feel free to reach out and connect with the authors - Eric Broda and John Miller on LinkedIn or respond to this article. Questions and comments are welcome and encouraged!

Looking for more?

👉 Discover the full O’Reilly Agentic Mesh book by Eric Broda and Davis Broda

🎧 Follow co-hosts John Miller and Eric Broda on The Agentic Mesh Podcast on Youtube, Spotify and Apple Podcasts. A new video every week!

All images in this document except where otherwise noted have been created by Eric Broda. All icons used in the images are stock PowerPoint icons and/or are free from copyrights.

The opinions expressed in this article are those of the authors alone and do not necessarily reflect the views of our clients.
