Enterprise Agents or Coding Agents – What is the Difference?

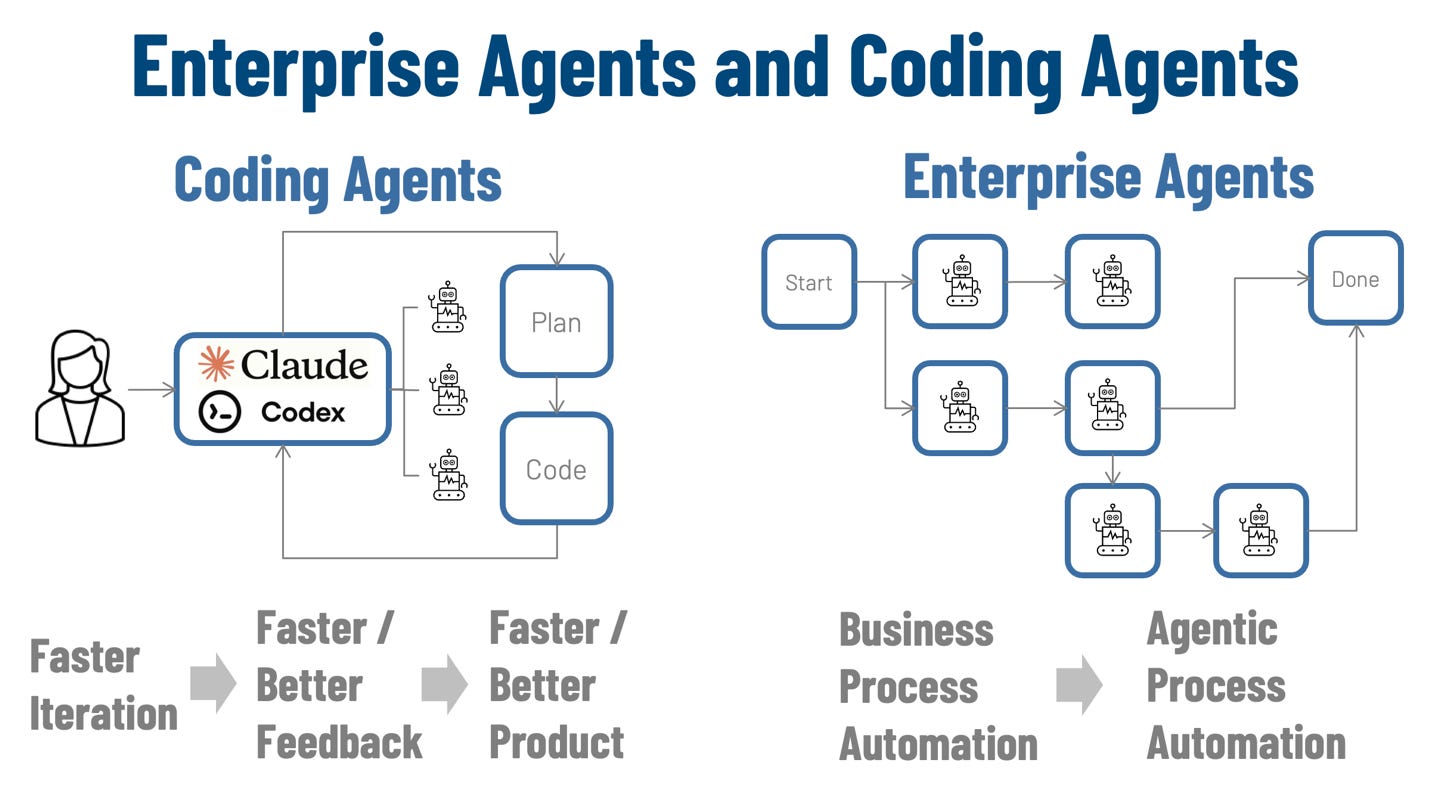

Coding agents have compressed the plan–build–test loop and made iteration dramatically faster and cheaper. The harder question is how to integrate enterprise agents – agents embedded in business processes – and apply the lessons from coding agents to accelerate those processes.

Introduction

On Feb 2, 2025, Andrej Karpathy tweeted: “There’s a new kind of coding I call “vibe coding”, where you fully give in to the vibes, embrace exponentials, and forget that the code even exists. It’s possible because the LLMs (e.g. Cursor Composer w Sonnet) are getting too good.”

That tweet captured a moment: a new, faster way of building software had become visible to everyone at once. What began as an improvisational style of prompting quickly matured into something more durable: coding agents. Today’s coding agents are integrated development tools that can read and modify large codebases, run tests, follow repository conventions, explain diffs, and operate inside the same feedback loops that make professional engineering work reliable.

Coding agents are changing day-to-day software engineering by shortening time-to-feedback and lowering the cost of iteration, and they’re quickly becoming part of the standard enterprise development toolchain. But enterprise agents are different: coding agents live inside an assistive loop with tight feedback (tests, review, rollback), while enterprise agents operate inside systems-of-record workflows where actions must be permissioned, auditable, and defensible, so scale, governance, security, and reliability become first-order requirements.

Coding Agents Supercharge Software Engineering

A year ago, it was already normal to ask an assistant for help. Now a different posture has emerged in the most advanced software engineering shops: developers plan and specify in English, use coding agents to build the code and initiate testing, run what was produced, and iterate (including going back to restate the specification and review the code in light of what they learned). When it works, and it often does, it feels like design and implementation collapsing into one tight loop that iterates faster than a human can comprehend the code produced!

The tangible benefit is speed: faster iterations, shorter time to feedback, and less friction moving from an idea to a software product. This is especially powerful at the start of a project, where the bottleneck is usually inertia. Tools such as Claude Code from Anthropic or OpenAI’s Codex push this further by giving developers a terminal-native agent that can edit files, run tests, and chain tool calls, making it plausible for a small team to ship a surprisingly capable prototype quickly. Case in point, 100% of Claude CoWork was created using Claude Code.

A common coding-agent pattern looks like this: a small team, bounded scope, and an iterative loop driven by a spec. The developer states a goal in model-friendly terms, requests an implementation, runs tests, refines the spec, and repeats. The codebase itself serves as a coherent corpus; the “right” code segments become the context. This works because artifacts are versioned, tests define success, and a human adjudicates trade-offs. The result: less time spent on syntax and scaffolding, and more on shaping behavior, product boundaries, and user outcomes.

Growing Pains are Real, but Rapidly Getting Better

Like all powerful tools, coding agents come with risks. The most visible one is the temptation to accept output that “looks right” without doing the work of understanding it. That risk shows up in many forms, including low-quality or overly verbose changes that clutter a codebase, pull requests that read like machine-generated filler, and small correctness issues that slip past casual review. You can think of this as a modern version of an old problem: copying code you don’t fully understand, now at higher volume and speed.

The key point is that these are growing pains, not indictments. They are the predictable side effects of a step-change in leverage. In experienced hands, coding agents amplify judgment: they compress time spent on routine work and expand time available for architecture, careful review, testing strategy, and design. In inexperienced hands, they can amplify overconfidence. That difference is not new to software; it is simply more pronounced when the tool can produce a lot of plausible output quickly.

This is why enterprises should not treat coding agents as a casual add-on. They should treat them as a capability that requires training, norms, and process. The goal is not to slow teams down; it is to make sure speed compounds rather than decays into maintenance debt.

Apprenticeship, Updated for the Agent Era

For enterprises, we think the right posture is adoption with structure. Coding agents absolutely belong in the toolchain, but the developer operating model needs to evolve around them. The most practical model is apprenticeship-like training for enterprise developers: explicit progression from assisted work to independent judgment, with review gates, shared standards, and clear expectations about verification.

In an apprenticeship model, early-career developers use coding agents as scaffolding while they learn the craft: reading code, tracing execution, understanding failure modes, and writing tests that genuinely constrain behavior. More senior developers model what “good” looks like in the agent era: using agents to accelerate exploration, insisting on small and reviewable diffs, demanding tests that encode intent, and treating unclear changes as a prompt to ask better questions rather than merge faster.

This training is all about professional software engineering discipline: matching existing engineering patterns to appropriate challenges, choosing the right architectural abstractions for key components, clarifying requirements, building test harnesses and practicing test-driven design, and setting code review norms (including agentic assistance). Done well, enterprises get real productivity gains.

From Coding Agents to Enterprise Agents

If coding agents can accelerate software engineering dramatically, why not apply the same lessons to enterprise agents capable of business operations? Why shouldn’t a bank, insurer, or logistics firm accelerate its operations? The opportunity is real.

Coding agents operate in a world where the primary artifacts are code, tests, and version control. Enterprise agents operate in a world where the primary artifacts are use cases, actions, policy, exceptions, and transparency. Businesses don’t operate from a code repository; their operating knowledge is scattered across policies, SOPs, training materials, ticket comments, emails, tacit knowledge, and lived experience. The success criterion is rarely as crisp as “tests pass.”

A coding agent’s “truth” is largely internal: does the code compile, do tests pass, does the UI behave, does the benchmark improve. Enterprise work is different. The “truth” is external and often adversarial: regulations, audits, contracts, counterparties, fraud, operational risk, reputational risk, and the simple fact that systems frequently disagree. Processes may run for weeks across teams and time zones, with exceptions that are not edge cases but the real work.

Importantly, none of this diminishes coding agents. It clarifies why the enterprise case requires additional engineering and governance. Coding agents can be extraordinarily reliable within their domain precisely because the domain has strong feedback loops and tight control of artifacts. Enterprise operations require building similarly strong loops, but around different primitives: transparent audit trails, evidence, policy, escalation, and approvals.

Introducing Enterprise Agents – A Different Type of Agent

Enterprises are coming to the practical realization that agents are becoming true participants in end-to-end business processes, where multiple agents coordinate across steps and systems and act to move work from initiation to completion. The distinction matters because the architecture and trust requirements are not the same.

Figure 1: Enterprise Agents and Coding Agents

Enterprise agents operate on a different surface area. They participate across multi-step business workflows that begin with an initiating event and end with an outcome that matters outside the developer toolchain. Software engineers would know this as business process automation, where business users and software designers describe how multiple system components coordinate to hand over work and move a work order or case file through a sequence of steps and actions. The promise is compelling: compressing cycle time, reducing manual handoffs, and sustaining execution. That shift turns “agent actions” into “process steps,” and raises the stakes.

What is Different for Enterprise Agents

That difference explains why enterprises will increasingly need specific governance to trust agents. One such example is a “Know Your Agent” (KYA) discipline. When agents become actors in operational processes, the primary risks are unauthorized tool use, hidden delegation, data misuse, and policy violations that are difficult to unwind after the fact. Trust must be engineered into the system: clear identity, explicit authorization boundaries, transparent purpose and methods, and traceability strong enough to survive audits and incidents. The practical claim is simple—agentic process automation only scales when accountability scales with it.
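To make the KYA idea concrete, here is a minimal sketch, not a definitive design. It assumes a hypothetical in-memory registry; the names `AgentRecord`, `AgentRegistry`, and the example agent and tool identifiers are illustrative and do not come from any specific product. It shows three of the ingredients named above: a clear identity with an accountable owner, an explicit authorization boundary, and a trace of every authorization decision.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentRecord:
    """Hypothetical 'Know Your Agent' registry entry."""
    agent_id: str
    owner: str                 # accountable human or team
    purpose: str               # declared purpose, reviewed at registration
    allowed_tools: frozenset   # explicit authorization boundary


@dataclass
class AgentRegistry:
    records: dict = field(default_factory=dict)
    trace: list = field(default_factory=list)   # one entry per decision

    def register(self, record: AgentRecord) -> None:
        self.records[record.agent_id] = record

    def authorize(self, agent_id: str, tool: str) -> bool:
        """Allow a tool call only for a registered agent and an allowed tool."""
        record = self.records.get(agent_id)
        allowed = record is not None and tool in record.allowed_tools
        # Denials are traced as well as approvals, so audits see both.
        self.trace.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "tool": tool,
            "allowed": allowed,
        })
        return allowed


registry = AgentRegistry()
registry.register(AgentRecord(
    agent_id="claims-intake-01",
    owner="claims-operations",
    purpose="Triage incoming insurance claims",
    allowed_tools=frozenset({"read_claim", "request_documents"}),
))

print(registry.authorize("claims-intake-01", "read_claim"))     # True
print(registry.authorize("claims-intake-01", "issue_payment"))  # False
print(registry.authorize("unknown-agent", "read_claim"))        # False
```

A real deployment would presumably back this with the enterprise identity provider and an append-only store rather than Python lists; the point of the sketch is only that unknown agents and out-of-scope tools are denied by default and that every decision leaves a record.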

Still, enterprises should adopt the speed-and-iteration culture that coding agents have made practical, but they must transplant it carefully.

Consider enterprise agent architecture.

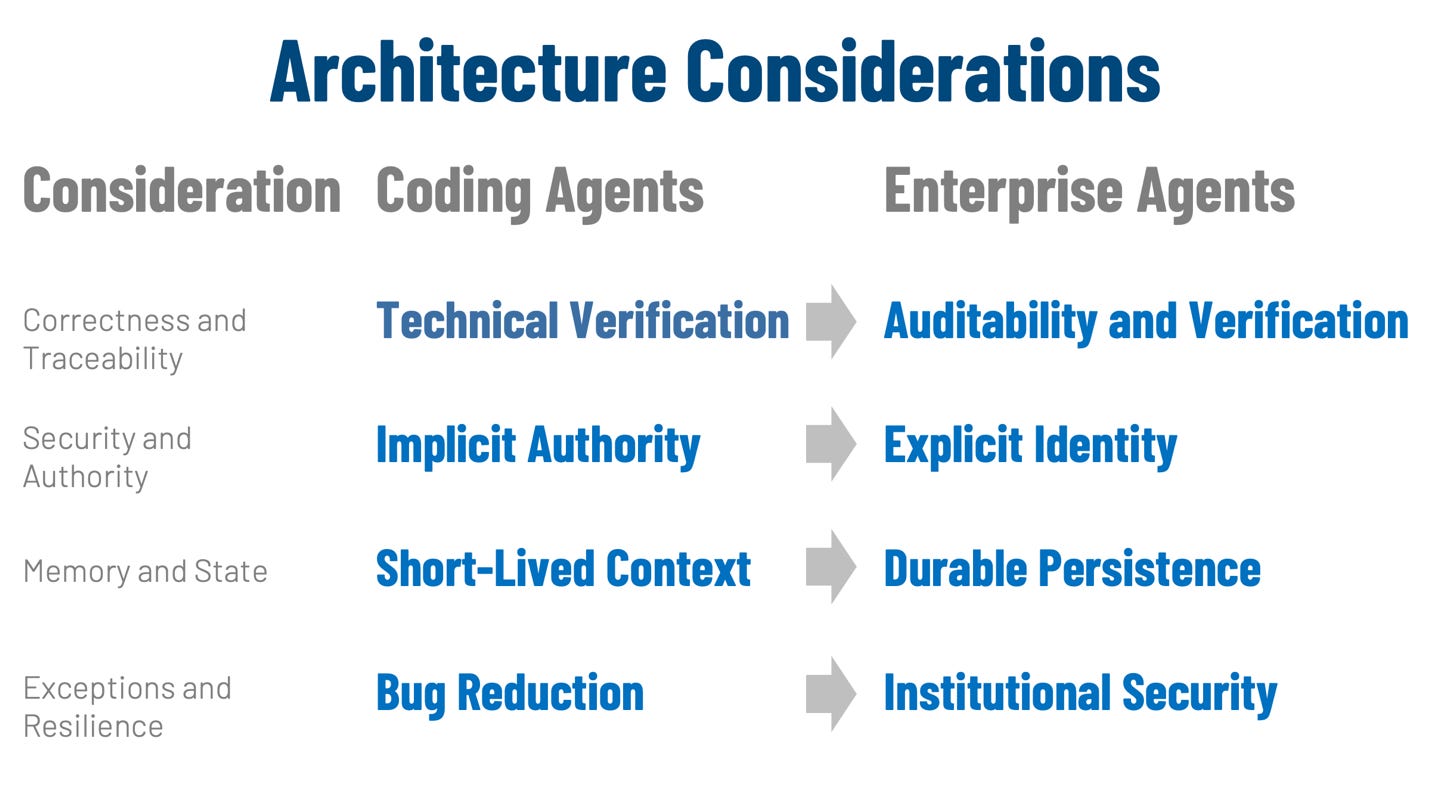

Enterprise agents differ from coding agents, which introduces distinct architectural considerations.

Figure 2: Architecture Considerations

Correctness, Observability, and Traceability

For coding agents, correctness is primarily a technical verification: does the resulting program behave as intended under review and testing? In this context, developers often measure success through metrics related to velocity and agility.

Enterprise agents shift the focus to socio-technical correctness, where every action must comply with corporate policy and satisfy rigorous controls. In operations, “feeling fast” is secondary to producing a defensible record that includes the decision, evidence, rationale, and a full audit trail. Success is measured by operational outcomes like cycle time, error rates, and audit findings to ensure that speed does not lead to downstream rework.
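One way to picture a defensible record is a tamper-evident audit trail. The sketch below is an assumption-laden illustration: the field names and the hash-chaining scheme are chosen for clarity and are not prescribed by the article. Each entry stores the decision, evidence, and rationale, plus a SHA-256 hash that also covers the previous entry's hash, so a later edit to any entry becomes detectable.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry in a trail


def _digest(body: dict) -> str:
    """Hash a record body deterministically (sorted keys)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(trail: list, actor: str, decision: str, evidence: list, rationale: str) -> dict:
    """Append a decision record chained to the previous entry's hash."""
    body = {
        "actor": actor,
        "decision": decision,
        "evidence": evidence,
        "rationale": rationale,
        "prev_hash": trail[-1]["hash"] if trail else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    trail.append(entry)
    return entry


def verify(trail: list) -> bool:
    """Recompute every hash and check that the chain is unbroken."""
    prev_hash = GENESIS
    for entry in trail:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_trail = []
append_entry(audit_trail, "claims-agent", "approve", ["invoice-17.pdf"], "Within policy limit")
append_entry(audit_trail, "claims-agent", "escalate", ["invoice-18.pdf"], "Amount exceeds limit")
print(verify(audit_trail))   # True

audit_trail[0]["rationale"] = "edited after the fact"
print(verify(audit_trail))   # False
```

The chain only detects tampering; it does not prevent it. In practice the trail would also need write-once storage and access controls, which are outside the scope of this sketch.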

Security, Identity, and Authority

A coding agent generally operates as a personal assistant under the developer’s implicit authority within a sandboxed or local environment. This “human-in-the-loop” model allows for rapid iteration because the security perimeter is often restricted to the developer’s immediate workspace and repository.

In contrast, enterprise agents must possess an explicit identity with scoped permissions and a clear delegation model. They integrate directly with institutional security frameworks, such as Role-Based Access Control (RBAC) and compliance gating, to manage actions across multiple platforms. In the enterprise, authorization is not an optional add-on; it is a foundational architectural component that defines what actions are possible.
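A scoped delegation model can be sketched in a few lines. The role and permission names below are hypothetical, and the intersection rule is one common design choice rather than the only one: an agent acting on a person's behalf may do only what both its own roles and the delegating person's roles permit.

```python
# Hypothetical role-to-permission mapping for illustration only.
ROLE_PERMISSIONS = {
    "claims_reader": {"claim:read"},
    "claims_adjuster": {"claim:read", "claim:update", "payment:propose"},
    "payments_approver": {"payment:approve"},
}


def permissions_for(roles):
    """Union of the permissions granted by each role; unknown roles grant nothing."""
    perms = set()
    for role in roles:
        perms |= ROLE_PERMISSIONS.get(role, set())
    return perms


def effective_permissions(agent_roles, delegator_roles):
    """Delegation never widens access: take the intersection of both sides."""
    return permissions_for(agent_roles) & permissions_for(delegator_roles)


def is_allowed(action, agent_roles, delegator_roles):
    return action in effective_permissions(agent_roles, delegator_roles)


# An adjuster-capable agent acting for a read-only user can only read.
print(is_allowed("claim:read", ["claims_adjuster"], ["claims_reader"]))    # True
print(is_allowed("claim:update", ["claims_adjuster"], ["claims_reader"]))  # False
```

The intersection keeps an agent from becoming a way around a user's own limits, and keeps a powerful user from pushing an agent beyond the scope it was registered for.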

Memory, Long Conversations, and State Management

Coding agents rely on short-lived tasks and a coherent local repository to maintain context. They typically operate in tight cycles—ranging from minutes to hours—where the developer is constantly present to provide feedback and reconstruct state as needed.

Enterprise agents require durable, queryable memory that persists through long-running workflows lasting days or weeks. This “context engineering” must track not only what happened, but why it happened, who approved it, and which policies applied. Without this persistent state management, an agent becomes a liability that cannot reliably pick up where it left off in a complex business process.
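As a rough illustration of durable, queryable memory, the sketch below uses Python's standard `sqlite3` module. The table layout and the helper names (`open_store`, `record_event`, `resume_point`) are assumptions made for this example. Each event records what happened, why, who approved it, and which policies applied, so a restarted agent can find where a workflow stopped.

```python
import json
import sqlite3


def open_store(path=":memory:"):
    """Open the event store; pass a file path for state that survives restarts."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS workflow_events (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               workflow_id TEXT NOT NULL,
               step TEXT NOT NULL,
               outcome TEXT NOT NULL,
               reason TEXT NOT NULL,      -- why it happened
               approved_by TEXT,          -- who approved it, if anyone
               policies TEXT NOT NULL     -- JSON list of policies applied
           )"""
    )
    return conn


def record_event(conn, workflow_id, step, outcome, reason, approved_by, policies):
    with conn:  # commits on success, rolls back if the insert raises
        conn.execute(
            "INSERT INTO workflow_events "
            "(workflow_id, step, outcome, reason, approved_by, policies) "
            "VALUES (?, ?, ?, ?, ?, ?)",
            (workflow_id, step, outcome, reason, approved_by, json.dumps(policies)),
        )


def resume_point(conn, workflow_id):
    """Return (step, outcome) of the latest event, or None for an unknown workflow."""
    return conn.execute(
        "SELECT step, outcome FROM workflow_events "
        "WHERE workflow_id = ? ORDER BY id DESC LIMIT 1",
        (workflow_id,),
    ).fetchone()


store = open_store()
record_event(store, "loan-42", "identity_check", "passed", "Documents matched", None, ["KYC-1"])
record_event(store, "loan-42", "credit_check", "pending_approval", "Score near threshold", None, ["CR-7"])
print(resume_point(store, "loan-42"))   # ('credit_check', 'pending_approval')
```

The in-memory database is used here only so the example runs anywhere; durability comes from pointing `open_store` at a file or, more realistically, at a managed database.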

Exception Handling and Resilience

In software projects, coding agents treat exceptions as bugs to be reduced or fixed through iterative re-prompting and manual tweaks. The goal is to reach a “happy path” where the code functions correctly within a limited, repo-centric scope.

For enterprise agents, exceptions are a standard part of the work because reality rarely fits a perfect process. These agents must treat exceptions as first-class paths, using production-grade resilience patterns such as circuit breakers and exponential backoff to detect errors, route them onward with supporting evidence, and know exactly when to escalate. Designed this way, the system can preserve transactional integrity by rolling back safely if a single step in a complex workflow fails.
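The two resilience patterns named above can be combined in a short sketch. Everything here is illustrative: `CircuitBreaker`, `run_step`, and the `escalate` callback are hypothetical names, and the breaker is deliberately simplified (it omits the timed "half-open" recovery state that production breakers usually have). Rollback and compensation are not covered.

```python
import time


class CircuitOpenError(Exception):
    """Raised when the breaker refuses to call a failing downstream system."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3):
        self.failure_threshold = failure_threshold
        self.failures = 0   # consecutive failures seen so far

    @property
    def is_open(self):
        return self.failures >= self.failure_threshold

    def call(self, func):
        if self.is_open:
            raise CircuitOpenError("circuit open; downstream call skipped")
        try:
            result = func()
        except Exception:
            self.failures += 1
            raise
        self.failures = 0   # any success closes the circuit again
        return result


def run_step(func, breaker, escalate, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Retry with exponential backoff; escalate when retries or the breaker give up."""
    for attempt in range(max_attempts):
        try:
            return breaker.call(func)
        except CircuitOpenError as exc:
            return escalate(exc)             # stop hammering a failing system
        except Exception as exc:
            if attempt == max_attempts - 1:
                return escalate(exc)         # retries exhausted
            sleep(base_delay * 2 ** attempt)  # 0.5s, 1s, 2s, ...


def post_to_ledger():
    raise TimeoutError("ledger unavailable")


delays = []  # capture delays instead of actually sleeping in this demo
outcome = run_step(
    post_to_ledger,
    CircuitBreaker(failure_threshold=3),
    escalate=lambda exc: ("escalated", type(exc).__name__),
    sleep=delays.append,
)
print(outcome)  # ('escalated', 'CircuitOpenError')
print(delays)   # [0.5, 1.0, 2.0]
```

Escalation is an explicit return path rather than an unhandled crash, which is the sense in which the exception becomes a first-class route through the workflow.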

What Can Enterprise Agents Learn from Coding Agents

If coding agents are here to stay, and all signs point that way, then it is in an enterprise’s interest to adopt them deliberately and capture the upside. The basic math is hard to ignore: teams that shorten iteration cycles ship more, learn faster, and allocate scarce senior attention to higher-leverage work. The risk is not merely that competitors will adopt coding agents; it is that they will learn how to operationalize them, turning individual productivity gains into organizational advantage, while slower firms keep treating them as optional experiments.

One lesson transfers cleanly from software to business operations: spec-driven design is coming back, and the “plan” is becoming as important as the “code.” In the agent era, the spec is no longer a static requirements document written once and promptly outdated. It can be a living artifact that an LLM helps draft, challenge, clarify, and continuously refine. That matters because most project failures are not caused by bad syntax; they are caused by unclear intent, missing constraints, unstated assumptions, and ambiguous definitions of “done.” Helping with the spec—tightening scope, enumerating edge cases, writing acceptance criteria—may prove more valuable than helping with implementation, because it makes every downstream artifact easier to build and safer to change.
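One way to keep a spec "living" is to express its acceptance criteria as executable checks. The sketch below is a hypothetical example: the refund rule, its limits, and the names `Criterion`, `SPEC`, and `evaluate` are invented for illustration. Each criterion pairs a plain-English statement with a check, so the same artifact can be read by people and run against any candidate implementation.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Criterion:
    description: str                    # the plain-English acceptance criterion
    check: Callable[[Callable], bool]   # returns True when the implementation satisfies it


def refund_allowed(amount: float, days_since_purchase: int) -> bool:
    """Hypothetical implementation under test."""
    return 0 < amount <= 500 and 0 <= days_since_purchase <= 30


SPEC = [
    Criterion("Refunds within 30 days and up to 500 are allowed",
              lambda f: f(100.0, 10) is True),
    Criterion("Boundary: exactly 500 on day 30 is allowed",
              lambda f: f(500.0, 30) is True),
    Criterion("Refunds after 30 days are rejected",
              lambda f: f(100.0, 31) is False),
    Criterion("Amounts above 500 are rejected",
              lambda f: f(500.01, 1) is False),
    Criterion("Non-positive amounts are rejected",
              lambda f: f(0.0, 1) is False),
]


def evaluate(spec, implementation):
    """Return the descriptions of every criterion the implementation fails."""
    return [c.description for c in spec if not c.check(implementation)]


print(evaluate(SPEC, refund_allowed))             # []
print(len(evaluate(SPEC, lambda a, d: True)))     # 3
```

When a new edge case surfaces, it is added to `SPEC` first; the failing criterion then defines the next unit of work for whoever, or whatever, writes the code.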

This is where the uncomfortable but accurate line lands. A colleague stated it like this: “You will eventually move faster than you can verify code as a human.” Coding agents compress the act of producing plausible code to near-zero marginal cost, which means the bottleneck shifts to verification: review, testing, and evidence that the change does what it claims without breaking what it shouldn’t. Enterprises should treat that quote as a design constraint. If verification cannot keep up, the organization will accumulate hidden risk, even if velocity looks strong. The only sustainable response is to invest in verification as an engineering capability—strong tests, tighter interfaces, clearer invariants, better observability, and a culture that treats “proof of correctness” (in practical, testable forms) as part of the deliverable rather than an optional afterthought.

This is where the diligence in creating a sound and detailed specification (with coding agent help, of course) pays dividends. Simply put, the specification becomes the bridge between speed and verification.

Conclusion

Coding agents are the future of software engineering. They are already delivering real productivity gains, and enterprises will increasingly treat them as part of the standard engineering stack. The right enterprise posture is to capture the upside while institutionalizing the practices that make speed safe: small diffs, strong review norms, test discipline, and apprenticeship-like training that turns agent leverage into durable engineering judgment.

For enterprise agents, the advice is parallel but broader. Borrow the iteration culture of coding agents and use coding agents aggressively to build the scaffolding of agentic process automation. At the same time, recognize that enterprise agents must be designed as business operators inside a controlled system: transparency, identity, policy, exception handling, durable memory, replayability, traceability, and collaboration are not optional features; they are the product. If you get those right, you can bring the capabilities of modern agentic tooling into the enterprise—without turning speed into operational risk.

***

This article was written in collaboration with John Miller. Feel free to reach out and connect with the authors - Eric Broda and John Miller on LinkedIn or respond to this article. Questions and comments are welcome and encouraged!

Looking for more?

👉 Discover the full O’Reilly Agentic Mesh book by Eric Broda and Davis Broda

🎧 Follow co-hosts John Miller and Eric Broda on The Agentic Mesh Podcast on YouTube, Spotify and Apple Podcasts. A new video every week!

All images in this document except where otherwise noted have been created by Eric Broda. All icons used in the images are stock PowerPoint icons and/or are free from copyrights.

The opinions expressed in this article are those of the authors alone and do not necessarily reflect the views of our clients.

I have a question about enterprise agents. When these are created and are supposed to be part of an agent ecosystem, what was that like with your clients: were they created by the business departments or by engineers? If engineers do it, they have to do it together with the business department, right? I have been wondering what kind of collaboration, in your opinion, worked best when creating enterprise agents that perform operational tasks. What team composition worked well and what didn't? And who "owns" the enterprise agent in the end: IT or business? I see a target picture in my mind's eye, but I wonder how companies do it when IT and business are separate departments.

The governance gap between coding agents and enterprise agents is real. I've seen this firsthand - my coding agent can make autonomous decisions about code commits because the blast radius is small and reversible. But when it touches anything customer-facing or involves real money, the rules change completely.

One thing I'd add: the biggest bottleneck isn't the governance framework itself. It's the handoff point between autonomous work and human review. Getting that boundary wrong in either direction (too loose or too strict) kills the whole value proposition.

The identity management point especially resonates - knowing which agent did what and why is harder than it sounds when you have multiple agents collaborating on the same task.