Agentic Process Automation – The Agentic Knowledge Fabric

The Agentic Knowledge Fabric is a runtime knowledge layer that captures enterprise knowledge from documents, systems, processes, policies, and expert judgment, then serves the minimum viable context required for effective and token-aware agent operations.

Introduction

Agentic Process Automation has agents participating directly in business processes, making step-level decisions, interpreting mixed inputs, coordinating across systems, and operating within policy and control boundaries. That shift matters because many enterprise processes now depend on judgment over documents, messages, exceptions, thresholds, and domain rules that do not fit cleanly into deterministic flow logic.

When an agent executes one of those enterprise steps, output quality depends on whether it receives the right context for that specific task: the right subset of enterprise knowledge, containing the correct definitions, policy constraints, exceptions, and decision thresholds. In most enterprises, that context is fragmented across policy manuals, standard operating procedures, source systems, regulatory texts, tickets, emails, and human judgment. As a result, the knowledge needed for a single decision step is rarely assembled in a form that is complete, scoped, and usable at execution time.

The Agentic Knowledge Fabric (AKF) is the knowledge foundation for Agentic Process Automation (APA). It addresses that operating gap by converting fragmented enterprise knowledge into bounded context artifacts that can be retrieved and assembled under explicit context limits.

AKF is built on an engineering premise: context should be treated as a product with predictable size, stable identifiers, provenance, and deterministic assembly. That premise drives both the logical architecture—how meaning is represented, indexed, linked, and selected—and the operational pipeline that builds and maintains those representations.

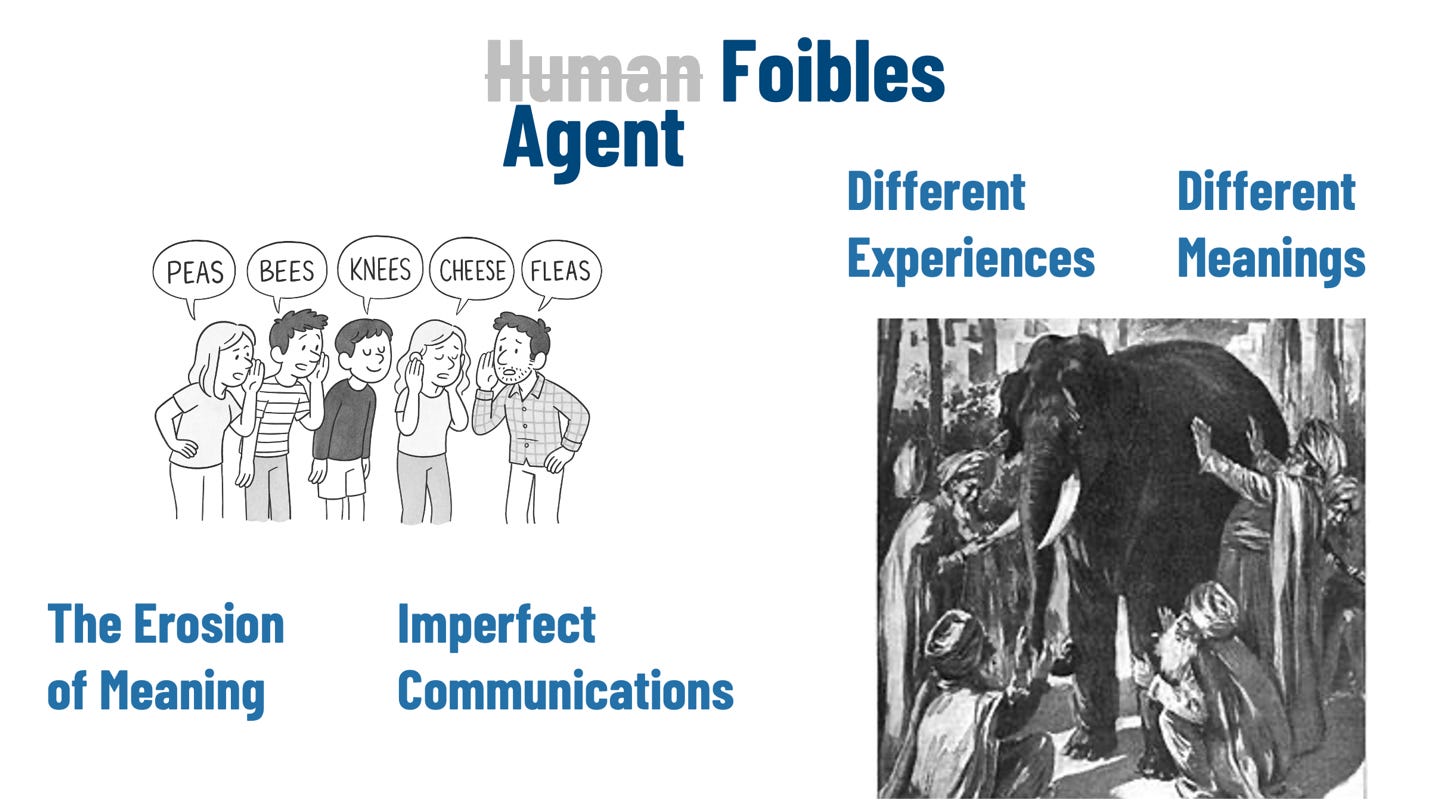

Collaboration Challenges

The parable of the blind men and the elephant captures the most concrete failure mode for agent execution: different teams experience the same enterprise object through different interfaces and therefore form different meanings. A “customer” in marketing is an audience member; in underwriting it is an applicant; in servicing it is an account holder; in AML it is a regulated subject tied to beneficial ownership and risk controls. Each view is locally valid because each team touches a different part of the operational system through different stages and obligations. The system-level gap is that most enterprises do not represent those meanings as explicit, scoped senses with enforceable boundaries.

Figure 1, Knowledge Foibles

The “broken telephone” dynamic describes how meaning erodes as knowledge moves through delivery layers. A policy intent begins in a control owner’s language, then becomes a requirement, then a user story, then an implementation, then a runbook, then a ticket template, then a data field label. At each handoff, qualifiers and exceptions are dropped because they are costly to encode, hard to test, or treated as edge cases. The resulting artifacts can remain internally consistent—tables exist, fields are populated, and SOPs read plausibly—while the operational meaning has shifted. For agents, that shift becomes a missing decision boundary.

Imperfect communications amplify the same failure even when intent does not degrade in a single linear chain. Large enterprises coordinate through partial artifacts: email threads, Slack snippets, meeting notes, tickets, internal wikis, and undocumented tribal knowledge. These channels support speed and local coordination. They rarely preserve replayable decision logic, applicability conditions, or provenance suitable for audit. Humans compensate with shared context and ad hoc clarification. Agents require a substrate that makes those constraints explicit and retrievable at the decision step.

When these failures combine—meaning drift across handoffs and multiple valid meanings across domains—the enterprise produces knowledge artifacts that are difficult to use for agent execution. Similarity search can surface relevant passages, but it does not consistently bind the correct term sense, the governing exception, or the controlling threshold for the current task step. Agents then operate with either too much context that dilutes constraints or too little context that omits them. AKF is motivated by a direct requirement: agents need runtime-delivered meaning that is disambiguated, scoped, policy-bound, and packaged to the decision step.

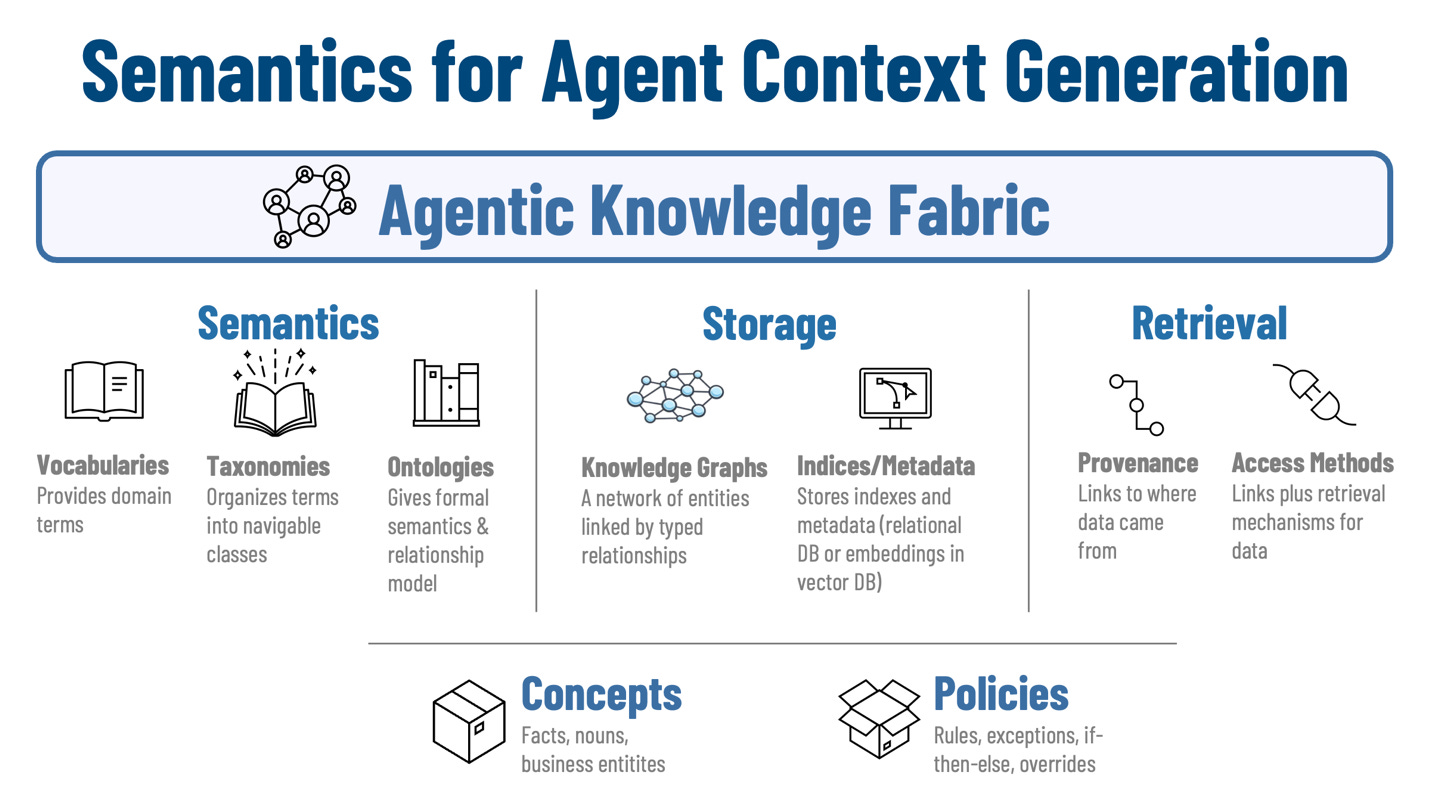

Agentic Knowledge Fabric: Semantics Optimized for Agent Context Generation

AKF assembles runtime context through four mechanisms: semantics, storage, retrieval, and a core foundation of concept and policy artifacts. Together, these mechanisms define the logical architecture for how enterprise meaning is represented, indexed, linked, and selected.

Figure 2, Semantics for Agent Context Generation

Semantics

Semantics reduces ambiguity before retrieval and packing begin. The semantic layer supports step-scoped context assembly with stable references and predictable structure.

Vocabularies are operational dictionaries tied to enterprise usage: the terms found in UI labels, tickets, logs, data fields, emails, and SOPs. Each term is normalized to a stable identifier. When the enterprise uses the same word in multiple domains, vocabulary entries are bound to explicit scoped senses, such as “customer:marketing” and “customer:aml.” Those scoped senses become retrieval filters, which prevents cross-domain leakage when an agent request uses a term that has multiple meanings.
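A minimal sketch of how scoped senses could act as retrieval filters. The `Sense` class, the `VOCABULARY` table, and the fragment tags are hypothetical names introduced for illustration; only the sense identifiers "customer:marketing" and "customer:aml" come from the text.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Sense:
    sense_id: str    # stable identifier, e.g. "customer:aml"
    domain: str      # scope in which this sense applies
    definition: str

# Hypothetical vocabulary: normalized term -> scoped senses.
VOCABULARY = {
    "customer": [
        Sense("customer:marketing", "marketing", "Audience member"),
        Sense("customer:aml", "aml", "Regulated subject tied to beneficial ownership"),
    ],
}

def resolve_sense(term: str, domain: str) -> Optional[Sense]:
    """Return the sense of `term` scoped to `domain`, or None if unbound."""
    for sense in VOCABULARY.get(term.lower(), []):
        if sense.domain == domain:
            return sense
    return None

def filter_by_sense(fragments, sense):
    """Keep only fragments tagged with the resolved sense identifier."""
    return [f for f in fragments if sense.sense_id in f["senses"]]

# Hypothetical fragments, each tagged with the senses it was written under.
fragments = [
    {"id": "frag-1", "senses": {"customer:marketing"}, "text": "Segment customers by engagement."},
    {"id": "frag-2", "senses": {"customer:aml"}, "text": "Verify beneficial owners before onboarding."},
]

sense = resolve_sense("Customer", "aml")
selected = filter_by_sense(fragments, sense)
print([f["id"] for f in selected])  # ['frag-2']
```

Resolving the sense before any similarity search is the design choice being illustrated: the marketing fragment never enters the candidate set for an AML request.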

Taxonomies in AKF are organized as retrieval facets aligned to decision variance. Common facets include jurisdiction, product family, process stage, risk tier, and control category because those dimensions often drive different thresholds, exception paths, and approval requirements. Their role is practical: they narrow the candidate set early so later stages can focus on the most relevant policy and evidence fragments.

Ontologies are kept shallow and task-directed. They focus on typed links that support context computation and traversal. This makes it possible to traverse from a task object to the controlling constraints without building a deep enterprise-wide semantic model that is expensive to maintain and difficult to operate.

Storage

Storage supports heterogeneous sources and runtime assembly. It holds representations at multiple granularities so the context server can compose a small, decision-ready package instead of forwarding whole documents.

Knowledge graphs store entity networks and typed links aligned to the semantic layer. Their role is navigational: they support targeted hops from a task object to governing policies, required approvals, dependent entities, and authoritative sources. That improves retrieval precision and reduces the amount of candidate text that must later be ranked and packed.
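The targeted hops described above can be sketched as a bounded breadth-first traversal over typed links. The node names, link types, and the `traverse` helper are assumptions made for illustration, not a description of a specific graph store.

```python
from collections import deque

# Hypothetical typed links: node -> list of (link_type, target).
EDGES = {
    "claim:123": [
        ("governed_by", "policy:payout-threshold"),
        ("belongs_to", "account:77"),
    ],
    "policy:payout-threshold": [
        ("requires_approval", "role:claims-supervisor"),
        ("sourced_from", "doc:claims-manual-v3"),
    ],
    "account:77": [("owned_by", "customer:42")],
}

def traverse(start, allowed_links, max_hops=2):
    """Follow only the allowed link types, up to max_hops from the start node."""
    seen = {start}
    found = []
    queue = deque([(start, 0)])
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue
        for link, target in EDGES.get(node, []):
            if link in allowed_links and target not in seen:
                seen.add(target)
                found.append((node, link, target))
                queue.append((target, depth + 1))
    return found

hops = traverse("claim:123", {"governed_by", "requires_approval", "sourced_from"})
for source, link, target in hops:
    print(f"{source} -[{link}]-> {target}")
```

Restricting traversal to a small set of link types and a hop limit is what keeps the candidate set small: the account and customer nodes are never visited because `belongs_to` is not in the allowed set for this step.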

Indices and metadata support deterministic filtering and ranking. Relational metadata captures constraints such as business unit, system of record, policy jurisdiction, effective date, approval status, and ownership. Embeddings (in vector databases) support similarity ranking when queries are partial or sources are unstructured. Metadata (in relational databases) bounds the candidate set; embeddings help order the remaining items.
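As a rough sketch of the split between metadata and embeddings, the example below filters on metadata first and ranks the survivors by cosine similarity. The unit records, the three-dimensional toy vectors, and the `retrieve` function are assumptions; a real deployment would likely use a relational store and a vector database instead of in-memory lists.

```python
import math
from datetime import date

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

# Hypothetical content units with governance metadata and toy embeddings.
UNITS = [
    {"id": "pol-ca-001", "jurisdiction": "CA", "status": "approved",
     "effective": date(2024, 1, 1), "vec": [0.9, 0.1, 0.0]},
    {"id": "pol-ca-002", "jurisdiction": "CA", "status": "draft",
     "effective": date(2024, 6, 1), "vec": [1.0, 0.0, 0.0]},
    {"id": "pol-us-001", "jurisdiction": "US", "status": "approved",
     "effective": date(2023, 3, 1), "vec": [1.0, 0.0, 0.0]},
    {"id": "pol-ca-003", "jurisdiction": "CA", "status": "approved",
     "effective": date(2023, 5, 1), "vec": [0.2, 0.9, 0.1]},
]

def retrieve(query_vec, jurisdiction, as_of, top_k=2):
    # Step 1: metadata bounds the candidate set deterministically.
    candidates = [
        u for u in UNITS
        if u["jurisdiction"] == jurisdiction
        and u["status"] == "approved"
        and u["effective"] <= as_of
    ]
    # Step 2: embeddings order what remains; the id breaks ties stably.
    ranked = sorted(candidates, key=lambda u: (-cosine(query_vec, u["vec"]), u["id"]))
    return [u["id"] for u in ranked[:top_k]]

result = retrieve([1.0, 0.0, 0.0], "CA", date(2024, 12, 31))
print(result)  # ['pol-ca-001', 'pol-ca-003']
```

Note that the draft unit and the US unit are the closest vectors to the query, yet neither is returned, because the metadata filter removes them before similarity is ever computed.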

Content is stored in retrieval-sized units – concept and policy cards described later – that are designed for assembly. Typical units include policy clauses, decision tables, exception definitions, SOP fragments tied to a tool action, and concept attribute blocks that an agent can cite. Each unit carries stable identifiers, provenance pointers, and governance metadata so a context package can be audited and reproduced.

Retrieval

Retrieval produces a context package. It begins with intent classification, entity binding, vocabulary expansion, and scope reduction through taxonomy facets and metadata filters. From there, the system traverses the graph to locate governing constraints and ranks candidate fragments using relevance and freshness signals.

The retrieval stage is where the mechanisms described earlier begin to operate together. Semantics constrains the meaning of the request, storage provides the candidate units and links, and retrieval assembles those inputs into an ordered set of fragments suitable for serving.

Provenance is returned with each retrieved fragment as operational metadata. It includes origin pointers to the underlying data and corpus documents, versioning and effective dates, and the selection path used to retrieve the fragment. Provenance not only supports traditional audit and lineage needs but also improves runtime behavior: the server can favor authoritative, compact sources and return the originating text when disagreements arise.
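One way to picture provenance as operational metadata is a small record attached to every fragment. The field names, the URI scheme, and the `prefer` tie-break below are hypothetical; they illustrate the idea of favoring authoritative, compact sources under those assumptions.

```python
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class Provenance:
    source_uri: str        # origin pointer (hypothetical URI scheme)
    version: str
    effective: date
    selection_path: tuple  # how retrieval reached this fragment
    authoritative: bool

@dataclass(frozen=True)
class Fragment:
    fragment_id: str
    text: str
    provenance: Provenance

def prefer(fragments):
    """Favor authoritative sources, then shorter text, then id for stable ties."""
    return min(
        fragments,
        key=lambda f: (not f.provenance.authoritative, len(f.text), f.fragment_id),
    )

wiki = Fragment(
    "frag-wiki-7",
    "Payouts over 10,000 need approval.",
    Provenance("wiki://claims/faq", "r12", date(2023, 2, 1),
               ("claim", "faq"), False),
)
manual = Fragment(
    "frag-man-42",
    "Claims above 10,000 require supervisor approval before payout.",
    Provenance("doc://claims-manual/v3#4.1", "v3", date(2024, 1, 1),
               ("claim", "governed_by", "policy:payout-threshold"), True),
)

chosen = prefer([wiki, manual])
print(chosen.fragment_id, chosen.provenance.source_uri)
```

The wiki fragment is shorter, but the manual clause wins because authority is compared before size.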

Concept and Policy Cards

Concept cards represent the stable entities and facts required for execution: customers, accounts, products, controls, cases, claims, shipments, vendors, thresholds, and their canonical identifiers. They keep those entities consistent across systems and representations so a request can bind to the correct object and then retrieve the relevant attributes and relationships for the current step.

Policy cards represent decision boundaries: rules, exceptions, overrides, approval thresholds, escalation conditions, and conflict-resolution precedence. They are structured with explicit applicability criteria so the server can select the governing logic for a task step directly, without scanning broad narrative policy text.
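A minimal sketch of selecting governing policy cards by explicit applicability criteria. The `PolicyCard` fields, the facet names, and the convention that a lower precedence number wins are assumptions made for illustration.

```python
from dataclasses import dataclass

@dataclass
class PolicyCard:
    card_id: str
    applies_to: dict   # facet -> required value (applicability criteria)
    precedence: int    # assumption: lower number takes priority
    rule: str

# Hypothetical cards for illustration.
CARDS = [
    PolicyCard("pc-general-payout", {"process_stage": "adjudication"}, 20,
               "Claims above the payout threshold require supervisor approval."),
    PolicyCard("pc-on-exception", {"process_stage": "adjudication", "jurisdiction": "ON"}, 10,
               "In this jurisdiction, water claims always require manual review."),
    PolicyCard("pc-underwriting", {"process_stage": "underwriting"}, 10,
               "Applicants must pass identity verification."),
]

def governing_cards(cards, step_facets):
    """Return cards whose every applicability criterion matches the step."""
    applicable = [
        c for c in cards
        if all(step_facets.get(facet) == value for facet, value in c.applies_to.items())
    ]
    # Precedence orders conflicting cards; card_id keeps ties stable.
    return sorted(applicable, key=lambda c: (c.precedence, c.card_id))

step = {"process_stage": "adjudication", "jurisdiction": "ON", "product_family": "home"}
selected_cards = [c.card_id for c in governing_cards(CARDS, step)]
print(selected_cards)  # ['pc-on-exception', 'pc-general-payout']
```

Because applicability is data on the card and not prose inside it, selection is a filter and a sort, with no need to read narrative policy text.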

Token Budgets and Context Packing

The context window is the scarcest resource in the agent landscape, so correctly assembling – or packing – the context window is a first-class problem. Context packing in AKF is implemented as deterministic allocation and ordering. The context server starts with a target size for each request and allocates portions of that space to block types. A common allocation reserves capacity for request framing and entity bindings, assigns the largest share to governing policy and decision logic, and uses the remainder for supporting evidence and provenance pointers.
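The allocation described above can be sketched with integer arithmetic. The specific percentages are assumptions; the text names the block types and their relative priority but does not give numbers.

```python
# Hypothetical shares; the source gives the ordering, not the values.
FRAMING_PCT = 15  # request framing and entity bindings
POLICY_PCT = 55   # governing policy and decision logic (largest share)

def allocate(target_tokens: int) -> dict:
    """Split a target size into per-block-type budgets."""
    framing = target_tokens * FRAMING_PCT // 100
    policy = target_tokens * POLICY_PCT // 100
    # The remainder goes to supporting evidence and provenance pointers.
    supporting = target_tokens - framing - policy
    return {"framing": framing, "policy": policy, "supporting": supporting}

budget = allocate(4000)
print(budget)  # {'framing': 600, 'policy': 2200, 'supporting': 1200}
```

Using integer division and assigning the remainder to the last bucket means the budgets always sum exactly to the target, which keeps the allocation reproducible across runs.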

Packing proceeds in priority order with explicit cut lines. Mandatory blocks are packed first: identifiers for bound entities, governing policy clauses, required approvals, and any disqualifying exclusions relevant to the step. Optional blocks are added next: exceptions, decision tables, thresholds, tool-specific SOP fragments, and supporting evidence. When the package exceeds the target size, the server applies stable reduction rules, such as removing examples before rules, commentary before decision tables, and secondary evidence before controlling clauses. The result is a context package whose shape remains stable across runs for the same request type.
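A sketch of priority packing with stable reduction rules, under stated assumptions: the block kinds, token counts, and `pack` function are hypothetical, and the drop order simply mirrors the examples in the paragraph above (examples first, then commentary, then secondary evidence).

```python
# Kinds listed earlier are removed first when the package is over budget.
DROP_ORDER = ["example", "commentary", "secondary_evidence"]

# Hypothetical blocks with pre-computed token counts.
BLOCKS = [
    {"id": "ent-1", "kind": "entity_ids", "mandatory": True, "tokens": 100},
    {"id": "pol-1", "kind": "policy_clause", "mandatory": True, "tokens": 400},
    {"id": "exc-1", "kind": "exclusion", "mandatory": True, "tokens": 200},
    {"id": "tbl-1", "kind": "decision_table", "mandatory": False, "tokens": 300},
    {"id": "ex-1", "kind": "example", "mandatory": False, "tokens": 250},
    {"id": "com-1", "kind": "commentary", "mandatory": False, "tokens": 200},
    {"id": "ev-2", "kind": "secondary_evidence", "mandatory": False, "tokens": 150},
]

def total(package):
    return sum(b["tokens"] for b in package)

def pack(blocks, target_tokens):
    """Pack mandatory blocks first, then optional ones, trimming by fixed rules."""
    mandatory = [b for b in blocks if b["mandatory"]]
    optional = [b for b in blocks if not b["mandatory"]]
    if total(mandatory) > target_tokens:
        raise ValueError("mandatory blocks alone exceed the target size")
    package = mandatory + optional
    # Apply reduction rules in a fixed order until the package fits.
    for kind in DROP_ORDER:
        if total(package) <= target_tokens:
            break
        package = [b for b in package if b["mandatory"] or b["kind"] != kind]
    # Last resort: drop remaining optional blocks from the end.
    while total(package) > target_tokens:
        package.pop()
    return [b["id"] for b in package]

print(pack(BLOCKS, 1200))  # ['ent-1', 'pol-1', 'exc-1', 'tbl-1', 'ev-2']
```

With a target of 1200, examples and commentary are removed and the package lands at 1150 tokens. The same inputs always yield the same package, which is the property the text calls a stable shape.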

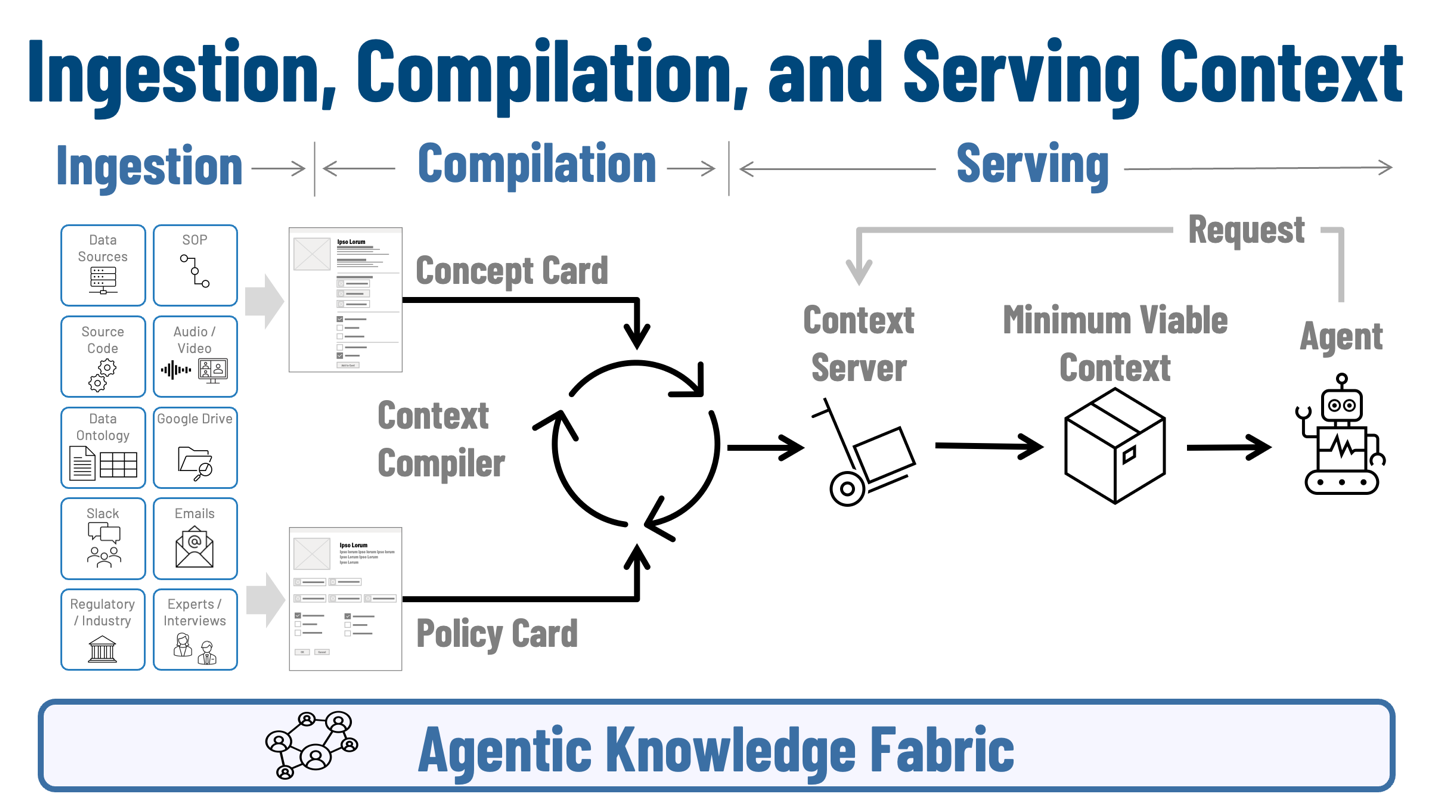

Agentic Knowledge Fabric: Ingesting, Compiling, and Serving the Agent Context Window

The logical architecture described earlier explains how meaning is represented, stored, and selected. This section turns to the operating flow that makes those capabilities usable in practice. AKF works through three stages: ingestion, compilation, and serving. Ingestion finds and prepares enterprise knowledge from structured, unstructured, and multi-modal sources. Compilation transforms that material into concept cards, policy cards, and minimum viable context artifacts that can be indexed, linked, and maintained. Serving takes an incoming agent request and assembles the relevant context into a bounded package that is ready for execution. Together, these three stages explain how fragmented enterprise knowledge becomes step-specific agent context.

Figure 3, Ingestion, Compilation, and Serving Context

Ingestion

Ingestion begins with discovery: identifying authoritative knowledge sources and classifying them by structure, ownership, access constraints, and change rate. It covers operational systems and curated datasets. It also includes process assets such as SOPs, runbooks, decision playbooks, tickets, and internal guidance because those materials often contain boundary conditions and exception handling absent from transactional records.

Structured ingestion covers relational tables, event streams, logs, and curated datasets. The mechanical work is entity and field alignment: mapping records to concept identifiers, attaching governance metadata such as system of record, lineage, and effective dates, and preserving enough schema semantics for deterministic filtering. This allows structured records to serve as compact grounding evidence without forcing an agent to interpret raw schemas inside the context window.

Unstructured ingestion covers documents and artifacts such as PDFs, internal wikis, tickets, emails, and file shares. The engineering task is segmentation into retrieval-sized units with stable references to source location and version. Segmentation follows downstream decision needs, so units align to decision boundaries, tool actions, and concept attributes instead of arbitrary chunk boundaries.
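To illustrate segmentation along decision boundaries, the sketch below splits a policy text on numbered clause markers and records a stable reference for each unit. The clause-numbering convention, the `doc@version#clause` identifier format, and the sample text are assumptions; real documents would need format-specific parsing.

```python
import re

# Assumption: clauses start with a dotted number such as "4.1 ".
CLAUSE = re.compile(r"^(\d+(?:\.\d+)*)\s+(.*)$")

def segment(doc_id, version, text):
    """Split text into clause-level units with source location and version."""
    units = []
    current = None
    for line_no, line in enumerate(text.splitlines(), start=1):
        match = CLAUSE.match(line)
        if match:
            current = {
                "unit_id": f"{doc_id}@{version}#{match.group(1)}",
                "start_line": line_no,
                "end_line": line_no,
                "text": match.group(2),
            }
            units.append(current)
        elif current is not None and line.strip():
            # Continuation lines stay with the clause they belong to.
            current["text"] += " " + line.strip()
            current["end_line"] = line_no
    return units

sample = "\n".join([
    "4.1 Claims above 10,000 require supervisor approval.",
    "This applies to all product families.",
    "4.2 Flood damage is excluded unless a rider is attached.",
])

units = segment("claims-manual", "v3", sample)
for unit in units:
    print(unit["unit_id"], unit["start_line"], unit["end_line"])
```

Each unit carries the document, version, clause number, and line range, so a served fragment can be traced back to its exact source location.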

Multi-modal ingestion adds transcription and extraction for audio and video. The pipeline produces time-coded transcripts, extracted decisions and action items, and references to the underlying media segment. This matters because many operational decisions are communicated verbally, and replayable provenance often requires returning the exact segment that supported a decision.

Tacit knowledge capture is treated as an ingestion source with a repeatable method. Expert interviews, incident reviews, and operator walkthroughs are converted into structured artifacts that preserve decision rationale, recurring exception patterns, and boundary conditions. Each artifact is linked to concepts and policy boundaries and stamped with provenance so it can be retrieved and served like any other unit.

Compilation

Compilation transforms ingested material into the artifacts the fabric uses at runtime. This stage centers on two compiled products, concept cards and policy cards, and on the compiler process that assembles and maintains them. The objective is to turn raw enterprise material into bounded, structured units that can later be selected and packaged for a specific agent step.

Concept cards capture the business entities and facts an agent may need to reference during execution. A concept card typically includes the concept identifier, scoped senses, synonyms, owning systems, key attributes, and relevant relationships. The card is organized so downstream stages can retrieve only the needed sections, such as identifiers, thresholds, related entities, or attribute blocks, without pulling the full card into context. In Figure 3, the concept card is one of the two primary compiled artifacts feeding the compiler loop.

Policy cards capture decision logic in a similarly bounded form. A policy card contains rules, exceptions, overrides, thresholds, escalation requirements, applicability criteria, and precedence information, along with pointers to authoritative sources and versions. This turns long-form policy material into a retrieval-ready unit that can be selected directly for a task step. In Figure 3, policy cards sit alongside concept cards as equal inputs into compilation, reflecting that runtime context depends on both business facts and governing constraints.

The Context Compiler resolves synonyms through the semantic layer, applies taxonomy facets, links entities and policies, versions cards, and maintains the relationships needed for later retrieval. Its role is iterative rather than one-time: as source material changes, the compiler updates cards, preserves structure, and keeps them aligned to the storage and retrieval model described earlier. The circular flow in the figure is useful here because compilation is not a simple pass-through stage; it is a continuing process of normalization, card generation, refinement, and maintenance.
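The iterative, versioned nature of compilation can be sketched as follows. Detecting change through a content hash is an assumption chosen for illustration, as are the `compile_card` function and the in-memory `store`; the text says only that the compiler versions cards and updates them as sources change.

```python
import hashlib
import json

def content_hash(body: dict) -> str:
    """Deterministic digest of a card body (keys sorted for stability)."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()

def compile_card(store: dict, card_id: str, body: dict) -> dict:
    """Create or update a card; bump the version only when content changes."""
    digest = content_hash(body)
    existing = store.get(card_id)
    if existing is not None and existing["digest"] == digest:
        return existing  # source unchanged, keep the current version
    version = existing["version"] + 1 if existing is not None else 1
    store[card_id] = {"card_id": card_id, "version": version,
                      "digest": digest, "body": body}
    return store[card_id]

store = {}
first = compile_card(store, "policy:payout", {"threshold": 10000})
repeat = compile_card(store, "policy:payout", {"threshold": 10000})
updated = compile_card(store, "policy:payout", {"threshold": 12000})
print(first["version"], repeat["version"], updated["version"])  # 1 1 2
```

Recompiling unchanged material is a no-op, so the compiler can run repeatedly over the same sources without churning card versions.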

Compilation also produces the specifications that later allow the serving layer to assemble Minimum Viable Context. For each task or process step, the system defines what sections of which concept cards and policy cards are required for correctness and audit. Those specifications are usually expressed through templates, then adjusted by heuristics and operational feedback. Templates are typically co-developed by process owners, domain SMEs, and platform teams because they encode the step-level view of what an agent must know before it can act.

A claims adjudication step makes this concrete. For that step, the compiled concept material may include claim identifier, loss type, claimed amount, timestamps, evidence pointers, policy identifier, and jurisdiction. The compiled policy material may include payout thresholds, applicable exclusions, and escalation rules tied to fraud indicators or manual review triggers. The point of compilation is not yet to serve those items to the agent; it is to express them as stable cards and card sections so the serving stage can later assemble the minimum viable context for that specific step with predictable structure and scope.
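The claims adjudication example can be sketched as a step template that names which sections of which cards are required. Every value below (identifiers, amounts, section names, the `STEP_TEMPLATES` layout) is a hypothetical placeholder; only the kinds of fields come from the paragraph above.

```python
# Hypothetical compiled cards, organized into named sections.
CONCEPT_CARDS = {
    "claim": {
        "identifiers": {"claim_id": "CLM-1001", "policy_id": "POL-2002"},
        "attributes": {"loss_type": "water", "claimed_amount": 12500, "jurisdiction": "ON"},
        "evidence": {"pointers": ["doc://claims/CLM-1001/photos"]},
        "history": {"notes": "Long narrative that adjudication does not need."},
    }
}
POLICY_CARDS = {
    "payout": {
        "thresholds": {"auto_approve_max": 10000},
        "exclusions": ["Flood damage without a rider"],
        "escalation": ["Route to manual review when a fraud indicator is present"],
        "commentary": "Background on why the threshold was chosen.",
    }
}

# The template is the specification: card -> required sections for this step.
STEP_TEMPLATES = {
    "claims_adjudication": {
        "concept": {"claim": ["identifiers", "attributes", "evidence"]},
        "policy": {"payout": ["thresholds", "exclusions", "escalation"]},
    }
}

def select_sections(cards, spec):
    """Copy only the sections the template asks for."""
    return {
        card: {section: cards[card][section] for section in sections}
        for card, sections in spec.items()
    }

def assemble(step):
    template = STEP_TEMPLATES[step]
    return {
        "step": step,
        "concept": select_sections(CONCEPT_CARDS, template["concept"]),
        "policy": select_sections(POLICY_CARDS, template["policy"]),
    }

package = assemble("claims_adjudication")
print(sorted(package["concept"]["claim"]))  # ['attributes', 'evidence', 'identifiers']
print(sorted(package["policy"]["payout"]))  # ['escalation', 'exclusions', 'thresholds']
```

The claim history and the policy commentary exist on the cards but are left out of the package, because the template for this step does not list them.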

Serving

Serving begins when an agent issues a request for a specific task step. That request is the trigger for context assembly. The serving layer interprets the request, binds the relevant entities, identifies the task or process step, and determines which compiled concept and policy materials are applicable. It then uses the retrieval structures described earlier—semantic bindings, metadata constraints, and linked relationships—to locate the card sections and policy fragments that govern that step.

The context server takes the incoming request, selects the relevant step template, applies jurisdictional and process-specific filters, and retrieves the candidate concept and policy blocks needed for the task. From there, it assembles a minimum viable context package: a bounded set of materials that gives the agent the facts, rules, thresholds, exceptions, and provenance needed to act. The package is ordered deliberately so the most controlling items, such as governing rules or exclusions, appear ahead of supporting evidence and secondary context.

The output of serving is a step-specific context package prepared for direct agent use. Each block is returned with stable identifiers and provenance pointers so the agent can reason over the material, reference it explicitly, and support downstream audit or human review. The package is shaped according to the packing and cut-line rules defined earlier, which allows the server to preserve the most important decision material when context space is limited.

Serving also establishes a clear interaction pattern between the agent and the fabric. The first response gives the agent the minimum viable context for the current step. If the agent requires more detail, it can issue another request for specific blocks, such as the full text of an exclusion clause, the evidence behind a threshold, or the escalation rule associated with a manual review path. Because those blocks carry stable identifiers, the server can fulfill the request directly without reconstructing or resending the entire package.
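The two-phase interaction can be sketched as a small server object: a compact first response, then follow-on requests by block identifier. The `ContextServer` class, its method names, and the block contents are assumptions for illustration and do not describe a published interface.

```python
class ContextServer:
    """Toy in-memory server keyed by stable block identifiers."""

    def __init__(self, blocks):
        self._blocks = {b["id"]: b for b in blocks}

    def initial_package(self, block_ids):
        """First response: identifiers plus compact summaries."""
        return [{"id": i, "summary": self._blocks[i]["summary"]} for i in block_ids]

    def expand(self, block_ids):
        """Follow-on response: full text for the requested blocks only."""
        missing = [i for i in block_ids if i not in self._blocks]
        if missing:
            raise KeyError(f"unknown block ids: {missing}")
        return [
            {"id": i, "text": self._blocks[i]["full_text"], "source": self._blocks[i]["source"]}
            for i in block_ids
        ]

# Hypothetical blocks.
server = ContextServer([
    {"id": "excl-flood", "summary": "Flood damage excluded without rider.",
     "full_text": "Loss caused by flood is excluded unless a flood rider is attached to the policy.",
     "source": "doc://claims-manual/v3#4.2"},
    {"id": "thr-payout", "summary": "Supervisor approval above 10,000.",
     "full_text": "Claims above 10,000 require supervisor approval before payout.",
     "source": "doc://claims-manual/v3#4.1"},
])

first_response = server.initial_package(["thr-payout", "excl-flood"])
follow_up = server.expand(["excl-flood"])
print([b["id"] for b in first_response])
print(follow_up[0]["source"])
```

Because the follow-on call addresses a block by its stable identifier, the server returns one clause and its source pointer without rebuilding the original package.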

This makes serving an active runtime function rather than a one-time delivery step. The server responds to the initial request, returns the smallest sufficient context package, and then supports bounded follow-on requests as the task unfolds. In this way, the serving layer provides a controlled interface between the compiled knowledge assets in the fabric and the agent that must use them during execution.

Comparisons with Alternatives

Traditional knowledge management systems are optimized for human discovery and long-form explanation. They are valuable repositories, but their dominant unit is the document, and their navigation assumes human readers who can skim, infer applicability, and reconcile conflicts. AKF shifts the unit of delivery to a packed context package composed of retrieval-sized fragments tied to concepts and decision boundaries, with deterministic assembly and provenance.

Enterprise ontology programs focus on conceptual consistency and semantic integration across systems. They can improve data interoperability, but they often expand in scope and depth until maintenance dominates, and they do not necessarily produce step-scoped decision artifacts that can be served during runtime execution. AKF uses shallow ontologies and typed links for traversal and applicability, while placing decision logic in explicit policy cards designed for retrieval and packing.

Plain RAG systems rely on similarity search over chunks and then expect the model to infer decision boundaries from retrieved text. Better chunking, metadata filters, and reranking can improve retrieval quality, and many teams should try those steps first. But those improvements still do not fully address scoped term senses, explicit policy applicability, or deterministic packing order under runtime constraints. AKF addresses those gaps through scoped senses in vocabularies, applicability facets in taxonomies and metadata, graph traversal to bind governing policies, and packing rules that place decision boundaries ahead of supporting narrative.

Conclusion

Agentic Process Automation depends on more than capable agents. It depends on whether each agent receives the correct business meaning for the step it is executing: the right definitions, thresholds, rules, exceptions, and decision boundaries, delivered in a form it can use at runtime. That is the role of the Agentic Knowledge Fabric. AKF provides the knowledge foundation for APA by turning fragmented enterprise knowledge into compiled, traceable, step-specific context that can support execution inside real process and control constraints.

This is the practical link between the two ideas. APA defines a new operating model in which agents participate directly in enterprise processes. AKF supplies the context infrastructure that makes that participation dependable. Enterprises will make the shift from isolated agent pilots to durable process automation when they treat context delivery with the same engineering discipline applied to systems, controls, and data pipelines. The path usually starts with one bounded process stage, a small set of governing concepts and policy cards, and instrumentation that shows whether the delivered minimum viable context is sufficient under real operating conditions. That is often enough to determine whether an organization is experimenting with agents around the edges of a process, or building the knowledge substrate required for APA to work at scale.

Looking for more?

👉 Discover the full O’Reilly Agentic Mesh book by Eric Broda and Davis Broda

🎧 Follow co-hosts John Miller and Eric Broda on The Agentic Mesh Podcast on YouTube, Spotify, and Apple Podcasts. A new video every week!

***

This article was written in collaboration with John Miller. Feel free to reach out and connect with the authors, Eric Broda and John Miller, on LinkedIn. Questions and comments are welcome and encouraged!

***

This is part of a larger article that addresses a broader suite of topics related to agents (see my full article list). If you like this article, you may wish to check out an upcoming book, “Agentic Mesh”, with O’Reilly, and soon on Amazon.

***

All images in this document except where otherwise noted have been created by Eric Broda. All icons used in the images are stock PowerPoint icons and/or are free from copyrights.

The opinions expressed in this article are those of the authors alone and do not necessarily reflect the views of our clients.